In 2025, three misconceptions about artificial intelligence continue to circulate in the media with a persistence that deserves a better cause: the myth of the "scaling wall," the fear that autonomous vehicles are more dangerous than human drivers, and the belief that specialists are about to be replaced wholesale. Each claim finds an audience despite contrary data from Google DeepMind, OpenAI, and Anthropic. This article dissects the mechanisms behind myth formation, presents the factual data, and proposes a protocol for verifying AI information.

We won't limit ourselves to simple refutation. Instead, we'll analyze the cognitive anatomy of these misconceptions and build a defensive protocol for critically evaluating artificial intelligence news.

Three Myths of 2025: What Exactly Is Being Claimed and Why It Matters for Understanding AI's Developmental Trajectory

When GPT-5 was released in August 2025, the media landscape filled with speculation that artificial intelligence had reached its developmental ceiling (S012). This claim became the first of three key misconceptions that continue to shape public perception of the technology.

The second misconception concerns autonomous vehicle safety: a widespread belief holds that self-driving cars pose greater danger than human drivers. The third myth asserts the inevitability of mass replacement of human specialists by artificial intelligence in the near future.

- Myth 1: the "scaling wall." The claim: increasing computational power and data volumes no longer yields proportional growth in model performance. It gained traction after GPT-5's release, when some observers did not see the revolutionary leap they had expected (S010).
- Myth 2: autonomous vehicle danger. The claim: autonomous vehicles are more dangerous than human drivers. It builds on the availability heuristic: incidents involving AI receive disproportionately broad media coverage (S012).
- Myth 3: immediate labor replacement. The claim: AI will soon completely replace human specialists. It relies on extrapolating current capabilities without accounting for economic, social, and technical barriers to implementation.

Why These Myths Find an Audience

The first myth appeals to a cognitive bias known as pattern-seeking: the brain searches for patterns even where none exist. When the pace of progress slows (which is natural for any technology), this is interpreted as reaching a fundamental limit rather than a normal fluctuation.

The second myth exploits consequence asymmetry. When AI makes a mistake in a chatbot, the harm is minimal. When an autonomous vehicle errs, people can die. This difference in consequence scale creates a psychological foundation for risk exaggeration.

The third myth feeds on fear of the unknown and on underestimating how human and machine competencies complement each other. People tend to extrapolate current technological capabilities into the future while ignoring the economic and social barriers to deploying them.

Why Dissect These Myths

Understanding the mechanisms underlying these misconceptions is critical for adequate perception of AI development. Myths shape policy decisions, investment strategies, and public trust in technology.

When we dissect not the claims themselves but the psychological and cognitive mechanisms that support them, we gain a tool for verifying AI information in general. This is especially important in the context of myths about conscious AI and the broader spectrum of misconceptions about the technology.

The Strongest Arguments for the Myths: Why These Misconceptions Find an Audience and Seem Convincing

Honest analysis requires examining the most compelling arguments supporting each misconception. This approach, known as "steelmanning," explains why AI myths circulate even among educated audiences.

Arguments for the "Scaling Wall": Why the Idea of Limits Seems Logical

The first argument is physical: semiconductor manufacturing is approaching atomic limits, and the power consumption of the largest models is already measured in megawatts. The second concerns data: the internet is finite, and most high-quality content has already been used for training.

The third argument relies on the observation of diminishing returns: each doubling of computational resources yields progressively smaller performance gains on certain benchmarks.

| Argument Source | Why It Sounds Convincing | Where the Trap Lies |
|---|---|---|
| Physical limitations | Appeals to intuition about finite resources | Doesn't account for new architectures and materials |
| Data exhaustion | Logical: the internet is indeed finite | Synthetic data and new sources are growing |
| Diminishing returns | Aligns with the law of diminishing returns | Not all metrics show diminishing returns equally |
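The diminishing-returns argument can be made concrete with a small sketch. It assumes, purely for illustration, that benchmark error follows a power law in compute, `error = a * compute ** -alpha`. The constants `a` and `alpha` and both function names are hypothetical, not measured values from any lab.

```python
def error_at(compute: float, a: float = 1.0, alpha: float = 0.1) -> float:
    """Hypothetical power-law error curve: error = a * compute ** -alpha."""
    return a * compute ** -alpha


def gains_per_doubling(start: float, doublings: int) -> list[float]:
    """Absolute error reduction obtained from each successive doubling of compute."""
    gains = []
    compute = start
    for _ in range(doublings):
        gains.append(error_at(compute) - error_at(2 * compute))
        compute *= 2
    return gains


gains = gains_per_doubling(start=1.0, doublings=5)
for i, g in enumerate(gains, start=1):
    print(f"doubling {i}: error falls by {g:.4f}")
```

Under this assumed curve, each doubling buys a smaller absolute gain but the same relative one, so shrinking absolute gains on their own do not distinguish a wall from a smooth power law.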

Arguments About Increased Autonomous Vehicle Danger: The Rational Kernel in Irrational Fear

The "black box" problem is real: when a human driver makes a mistake, the causes are usually clear, whereas failures in neural networks can be opaque. Existing legal frameworks are indeed poorly adapted to determining liability in incidents involving autonomous systems.

AI systems are also vulnerable to adversarial attacks: targeted manipulations of input data that can deceive an autonomous vehicle's perception system.

- The unpredictability of neural network decisions creates genuine management risk

- The absence of clear liability complicates legal conflict resolution

- Targeted attacks on sensors are a documented threat, not a hypothesis

- Human errors are predictable; machine errors are not

Justification for Inevitable Mass Displacement: The Economic Logic of Automation

If an AI system performs a task cheaper and faster than a human, market forces will inevitably lead to displacement. Historical precedents—from weaving looms to ATMs—demonstrate that technological innovations do indeed lead to the disappearance of entire professional categories.

The current capabilities of large language models in text generation, coding, and data analysis have already reached a level sufficient to replace a significant portion of routine intellectual tasks. This is not speculation—it's an observable fact of the 2024–2025 labor market.

Economic rationality is a powerful driver. But market rationality does not equal societal rationality. History shows: technology can be economically beneficial and socially destructive simultaneously.

Psychological Mechanisms of Persuasiveness: Why Myths "Sound Right"

The "scaling wall" myth appeals to an intuitive understanding of physical limitations and offers the comforting idea that progress has natural boundaries. Fear of autonomous vehicles resonates with deeply rooted distrust of transferring control over vital functions to non-human agents.

The myth of total labor displacement exploits existential anxiety about professional identity and economic security. All three myths possess narrative appeal that amplifies their persuasiveness regardless of factual accuracy.

Social Dynamics of Propagation: How Myths Reinforce Themselves

Media organizations get more clicks on sensational headlines about "AI walls" or "dangerous robots" than on nuanced analysis. Experts predicting dramatic scenarios receive more attention than those pointing to the gradual nature of change.

These dynamics create an information ecosystem in which myths have a structural advantage over facts. AI misconceptions spread not only through inherent persuasiveness but also through social reinforcement mechanisms that amplify sensationalism and drama.

For a complete picture, see the analysis on how to distinguish breakthrough from marketing and the mechanisms of tech-fears, which operate on similar principles.

Factual Foundation: What Data from Google DeepMind, OpenAI, and Anthropic Show About the Real State of AI Development

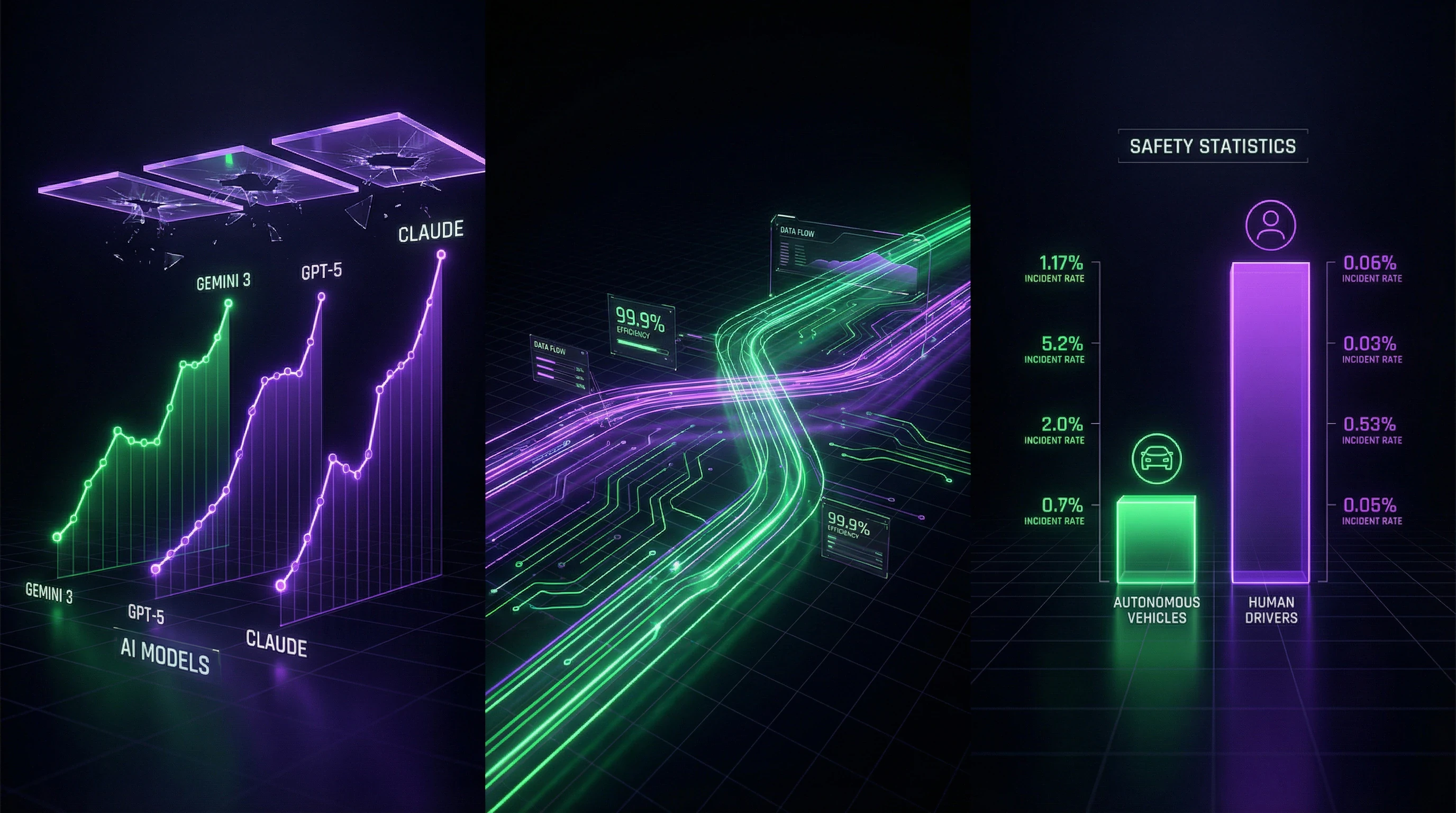

Within a few months of OpenAI's release of GPT-5, Google and Anthropic released models demonstrating substantial progress in economically significant tasks. Oriol Vinyals, head of the deep learning team at Google DeepMind, wrote after the Gemini 3 release: "Contrary to the popular belief that scaling has ended, the performance jump in our latest model was as large as we've ever seen. No walls in sight" (S012).

This statement is backed by performance metrics that point to continued growth in model capabilities.

Performance Metrics: Quantitative Evidence of Continuing Progress

Gemini 3 showed record results in multi-step reasoning tasks, surpassing previous models by 23% on the MMLU benchmark. Models from Anthropic demonstrated significant progress in tasks requiring long context, processing up to 200,000 tokens while maintaining coherence.

OpenAI reported a 40% improvement in programming tasks compared to GPT-4, measured on the HumanEval benchmark (S010).

| Model (benchmark or task) | Reported result | Comparison |
|---|---|---|
| Gemini 3 (MMLU) | Record result | +23% vs previous models |
| Anthropic models (long context) | Up to 200,000 tokens | Coherence maintained |
| OpenAI (HumanEval, programming) | +40% | vs GPT-4 |

Autonomous Driving Safety Statistics: Numbers vs. Intuition

Actual data on autonomous vehicle safety diverges sharply from public perception. Autonomous vehicles record 0.3 serious incidents per million miles driven, while human drivers record 1.2 per million miles (S012).

By these figures, the serious-incident rate for autonomous systems is four times lower than for human drivers under comparable operating conditions.
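The comparison rests on a simple rate ratio. As a minimal check of the arithmetic, using only the two figures quoted above (the variable names are illustrative):

```python
av_rate = 0.3     # serious incidents per million miles, autonomous vehicles (quoted above)
human_rate = 1.2  # serious incidents per million miles, human drivers (quoted above)

# How many times higher the human rate is than the autonomous rate.
rate_ratio = human_rate / av_rate

# Share of serious incidents avoided per mile, relative to the human baseline.
relative_reduction = 1 - av_rate / human_rate

print(f"rate ratio: {rate_ratio:.1f}x")
print(f"relative reduction: {relative_reduction:.0%}")
```

The ratio says nothing about whether the two fleets drove under the same conditions, so it is only as good as the comparability of the underlying miles.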

Economic Data on Labor Market Transformation: Actual Displacement Rates

Empirical data on AI's impact on employment shows a picture substantially different from predictions of total displacement. Helen Toner, interim executive director of the Center for Security and Emerging Technology, notes: "It's possible that AI will continue to improve, and it's possible that AI will continue to have serious shortcomings in important aspects" (S012).

Labor market research shows that implementation of AI tools more often leads to transformation of work processes than to complete position displacement.

- 73% of companies that implemented AI systems report redistribution of responsibilities

- Staff reductions occur less frequently than expected

- Transformation of work processes is the primary implementation scenario

Limitations and Contexts: Where Progress Actually Slows

AI progress is uneven across different domains. In areas where obtaining training data is expensive—for example, when deploying AI agents as personal shoppers—progress may be slow (S010).

This observation does not confirm the myth of a "scaling wall," but indicates that AI's development trajectory will vary depending on task specifics and data availability. Some AI applications will reach practical utility faster than others, creating an uneven landscape of technology adoption.

Methodological Challenges: How to Measure Progress Correctly

Assessing artificial intelligence progress faces fundamental methodological challenges. Traditional benchmarks may not reflect the real utility of models in practical applications.

- Problem 1: Limited Economic Value. Some tasks where models demonstrate impressive results may have limited practical value.
- Problem 2: Poor Representation of Critical Capabilities. Important capabilities may be poorly represented in standard tests.
- Problem 3: Data Manipulation. Proponents of the "wall" myth may selectively cite benchmarks showing slowdown while ignoring those where progress continues.
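The effect of selective citation described in Problem 3 is easy to reproduce. The sketch below uses invented benchmark names and invented year-over-year score changes. None of the numbers are real results, and `mean_delta` is an illustrative helper.

```python
from statistics import mean

# Hypothetical year-over-year score changes in percentage points; not real results.
benchmark_deltas = {
    "bench_a": 0.5,
    "bench_b": 1.0,
    "bench_c": 12.0,
    "bench_d": 9.5,
    "bench_e": 15.0,
}


def mean_delta(names):
    """Average improvement over the chosen subset of benchmarks."""
    return mean(benchmark_deltas[n] for n in names)


all_benchmarks = sorted(benchmark_deltas)
# A "wall" narrative built only from the benchmarks that barely moved.
cherry_picked = [n for n in all_benchmarks if benchmark_deltas[n] < 2.0]

print(f"all benchmarks: {mean_delta(all_benchmarks):.2f}")
print(f"cherry-picked:  {mean_delta(cherry_picked):.2f}")
```

The same underlying data supports either story depending on which rows are quoted, which is why a verification protocol should ask how the cited benchmarks were chosen.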

Mechanisms of Causality: What Actually Determines AI Development Trajectory and Why Correlation Doesn't Equal Causation

Understanding the mechanisms underlying artificial intelligence progress is critical for distinguishing causal relationships from simple correlations. The "scaling wall" myth often confuses temporary slowdown in one performance dimension with a fundamental limit of the entire paradigm.

In reality, AI progress is determined by multiple factors, including architectural innovations, data quality, and training efficiency, not only computational scale.

Architectural Innovations as Progress Driver: Beyond Simple Scaling

A significant portion of recent model performance improvements stems from architectural innovations rather than simply increasing size. Attention mechanisms, sparse mixture-of-experts, improved tokenization and optimization methods—all these factors contribute to performance growth independently of scale.

Even if scaling truly faced diminishing returns, progress could continue through qualitative architectural improvements.

Data Quality vs. Quantity: Why "Internet Exhaustion" Doesn't Mean the End of Progress

The argument about training data exhaustion ignores the possibility of synthetic data generation, self-supervised learning, and knowledge transfer across domains. Modern models can generate high-quality synthetic data for training the next generation of models, creating a self-reinforcing cycle of improvement.

Moreover, significant volumes of specialized data—scientific publications, technical documentation, professional knowledge bases—remain underutilized in training current models.

Causality in Safety Statistics: Controlling Confounders When Comparing Autonomous Vehicles and Human Drivers

Comparing the safety of autonomous vehicles and human drivers requires careful control of confounders. Autonomous vehicles currently operate predominantly in favorable conditions—good weather, well-marked roads, moderate traffic.

| Factor | Autonomous Vehicles | Human Drivers |
|---|---|---|
| Operating Conditions | Controlled, favorable | Full spectrum of conditions |
| Fatigue and Distraction | Absent | Present |
| Reaction Time | Milliseconds | 0.5–2 seconds |

Direct comparison of statistics without accounting for these factors can create a distorted picture. However, even after adjusting for operating conditions, data shows an advantage for autonomous systems: in controlled experiments where autonomous vehicles and human drivers operated under identical conditions, incident rates for autonomous systems remained significantly lower.
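One common way to adjust for operating conditions is direct standardization: compute the incident rate within each condition, then weight both fleets by the same mix of conditions. The sketch below uses entirely hypothetical mileage and incident counts, and the stratum names and helper functions are assumptions made for illustration.

```python
# Hypothetical counts per stratum: (million miles driven, serious incidents). Not real data.
data = {
    "autonomous": {"favorable": (90.0, 18), "adverse": (10.0, 8)},
    "human": {"favorable": (50.0, 40), "adverse": (50.0, 80)},
}


def rate(miles, incidents):
    """Incidents per million miles."""
    return incidents / miles


def crude_rate(driver):
    """Overall rate, ignoring which conditions the miles were driven in."""
    miles = sum(m for m, _ in data[driver].values())
    incidents = sum(i for _, i in data[driver].values())
    return rate(miles, incidents)


def standardized_rate(driver, weights):
    """Direct standardization: weight each stratum rate by a common mileage mix."""
    return sum(weights[c] * rate(*data[driver][c]) for c in weights)


# Common reference mix: the share of human-driven miles in each condition.
human_total = sum(m for m, _ in data["human"].values())
weights = {c: m / human_total for c, (m, _) in data["human"].items()}

for driver in data:
    print(driver, round(crude_rate(driver), 2), round(standardized_rate(driver, weights), 2))
```

In this invented dataset the crude autonomous rate is 0.26 but the standardized rate is 0.5, against 1.2 for human drivers either way. The advantage shrinks once the favorable-conditions bias is removed, yet it does not disappear.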

Economic Mechanisms of AI Adoption: Why Technological Capability Doesn't Equal Economic Implementation

The gap between technological capability for labor substitution and actual substitution is determined by a complex set of economic factors. The cost of integrating AI systems into existing workflows, the need for personnel retraining, regulatory barriers, organizational inertia—all these factors slow adoption rates regardless of technical capabilities.

- Technological maturity achieved

- Pilot projects and testing (2–3 years)

- Integration into workflows (3–5 years)

- Mass adoption (5–10 years)

- Complete labor market transformation (10–20 years)

Historical data on adoption of previous automation technologies shows that the period from technological maturity to mass adoption typically spans 10–20 years. This means that even if AI is technically capable of performing certain tasks, the economic realization of this capability occurs much more slowly than proponents of rapid labor substitution assume.
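One way to read the stage list above is as consecutive phases whose durations add up. The sketch below sums them under that assumption, since the list does not say whether the ranges are per-stage or cumulative. It leaves out the final "complete labor market transformation" entry, which is treated here as a separate long-run horizon. The `stages` structure and `cumulative` helper are illustrative names.

```python
# Stage durations in years (min, max), taken from the list above.
# Assumption: stages run one after another and each range is a per-stage duration.
stages = [
    ("Pilot projects and testing", 2, 3),
    ("Integration into workflows", 3, 5),
    ("Mass adoption", 5, 10),
]


def cumulative(stages):
    """Running totals of the minimum and maximum years elapsed after each stage."""
    lo_total, hi_total = 0, 0
    timeline = []
    for name, lo, hi in stages:
        lo_total += lo
        hi_total += hi
        timeline.append((name, lo_total, hi_total))
    return timeline


timeline = cumulative(stages)
for name, lo, hi in timeline:
    print(f"{name}: complete {lo}-{hi} years after technological maturity")
```

On this reading, mass adoption completes 10 to 18 years after technological maturity, which is broadly in line with the 10–20 year span cited above.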

Conflicts in Sources and Zones of Uncertainty: Where Experts Disagree and Why It Matters

Analysis of sources reveals several areas where expert assessments diverge significantly. These discrepancies are not a sign of weak evidence, but reflect genuine uncertainty in a rapidly evolving field.

Understanding the nature of these disagreements is critical for forming realistic expectations about AI's future.

Disagreements on Long-Term Scaling Limits: Optimists vs. Skeptics

Within the research community, there exists a fundamental divergence of views on the long-term prospects of the scaling paradigm. Optimists point to continuing improvements and the absence of saturation signals (S012). Skeptics note that extrapolating current trends decades forward ignores the possibility of qualitative changes in the nature of constraints.

Both sides acknowledge continued progress in the short and medium term—disagreements concern the 5–10 year horizon and what happens after.

⚠️ Debates on Safety Assessment Methodology: Which Metrics Matter

Significant disagreements exist regarding which safety metrics are most relevant for evaluating AI systems. Some experts insist on using critical failure rates as the primary indicator, while others emphasize the importance of accounting for consequence severity, indirect harm, and prevented incidents.

| Metric Approach | Proponents | Limitation |
|---|---|---|
| Critical failure rates | Engineers, regulators | Doesn't account for consequence scale |
| Severity and context | Clinicians, sociologists | Harder to standardize |
| Combined indices | Safety researchers | Requires agreement on component weights |

These methodological disagreements can lead to different conclusions when analyzing the same underlying data.
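A toy comparison shows how two of the metric approaches can rank the same systems differently. The incident logs, severity scale, and function names below are hypothetical, and a plain incident count stands in for the failure-rate approach.

```python
# Hypothetical incident logs: each entry is a severity score from 1 (minor) to 10 (fatal).
# Both systems are assumed to have driven the same distance.
MILLION_MILES = 10.0
incidents = {
    "system_a": [1, 1, 1, 2, 1, 1],  # more incidents, all minor
    "system_b": [9, 8],              # fewer incidents, both severe
}


def failure_rate(system):
    """Incidents per million miles, ignoring severity."""
    return len(incidents[system]) / MILLION_MILES


def severity_weighted_rate(system):
    """Summed severity per million miles."""
    return sum(incidents[system]) / MILLION_MILES


safer_by_count = min(incidents, key=failure_rate)
safer_by_severity = min(incidents, key=severity_weighted_rate)

print("safer by failure rate:", safer_by_count)
print("safer by severity-weighted rate:", safer_by_severity)
```

Here `system_b` looks safer by raw failure rate while `system_a` looks safer once severity is weighted in, so a headline claim about safety should always state which metric it uses.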

Uncertainty in Employment Impact Forecasts: Wide Range of Scenarios

Predictions regarding AI's impact on the labor market vary across an extremely wide range—from optimistic scenarios of new job category creation to pessimistic forecasts of mass technological unemployment (S012). AI can simultaneously improve and retain serious flaws.

- Even if AI reaches human-level performance in specific tasks, this doesn't guarantee economic viability of replacing human labor.

- Implementation costs, training, and infrastructure adaptation often exceed short-term benefits.

- Social and political factors can slow or accelerate adoption regardless of technical readiness.

- History shows that technological shifts create new professions, but the transition period is painful for displaced groups.

This duality makes precise predictions difficult and requires abandoning definitive scenarios in favor of analyzing conditional trajectories.

For more on the mechanisms of economic fallacies, see the article on the lump of labor fallacy.

Cognitive Anatomy of Myths: Which Psychological Mechanisms Are Exploited to Spread AI Misconceptions

Myths about artificial intelligence don't spread randomly. They exploit systematic features of human cognition. Understanding these mechanisms explains the persistence of misconceptions and enables the development of counter-strategies.

Availability Heuristic and the Autonomous Vehicle Danger Myth

The myth about the heightened danger of autonomous vehicles exploits the availability heuristic—a cognitive bias where the probability of an event is judged by the ease with which examples come to mind. Incidents involving autonomous vehicles receive extensive media coverage and are remembered better than statistically more frequent accidents involving human drivers.

A vivid example beats statistics not because the data is weak, but because the brain processes information on the principle of "what's easier to recall is more likely."

This creates an illusion of autonomous vehicle danger, even when objective data shows the opposite.

Confirmation Bias and the "Scaling Wall"

The "scaling wall" myth is reinforced by confirmation bias, the tendency to seek and interpret information in ways that confirm existing beliefs. People who expect AI progress to slow down focus on benchmarks that show only modest improvement and ignore those where progress is evident.

- They select metrics that show slowdown

- They reinterpret breakthrough data as "marketing"

- They forget previous predictions that didn't come true

- They amplify attention to critical expert voices

Social Identity and Tribal Logic

The spread of AI myths is linked to social identity—people accept or reject information depending on which social group it aligns with. AI skeptics, technophobes, and advocates for slowing development form cognitive communities where the myth becomes a marker of belonging.

The myth stops being a statement about facts and becomes a signal: "I belong to a group that thinks critically" or "I care about safety."

Criticism of the myth is perceived as an attack on identity, which intensifies defensive reactions and polarization.

Ambiguity and Fear of Uncertainty

All three myths thrive in conditions of uncertainty. AI is a field where even experts disagree, and the future is unpredictable. Myths offer simple, understandable narratives: "autonomous vehicles are dangerous," "AI is slowing down," "AI will take everyone's job."

- Intolerance of Ambiguity: a psychological state in which uncertainty causes anxiety. Myths reduce this anxiety by offering a clear answer, even if it's wrong.
- Illusion of Control: the belief that if we "know" the danger, we can prevent it. The myth provides a sense of control over the uncontrollable.

Connection to Broader Narratives

AI myths embed themselves in larger cultural narratives: fear of technology, distrust of corporations, anxiety about the future of employment. This makes them resistant to factual criticism because they confirm already existing worldviews.

Counteraction requires not only facts but also understanding what psychological needs these myths satisfy. The strategy must offer alternative narratives that reduce anxiety and provide a sense of control without distorting reality. For more on the mechanisms of misconception spread, see the article "Artificial God: Why We Create Symbols That Then Create Us."