🧠 Myths About Conscious AI

Debunking Myths About Artificial Intelligence and Technology

From historical misconceptions to modern technological myths — a critical analysis of common perceptions about AI and their influence on public understanding

Overview

Artificial intelligence is surrounded by myths, from "conscious machines" to the "replacement of all professions." Like the images of Vikings in horned helmets or Nero with a torch, popular culture creates pictures of AI that are far removed from reality. These misconceptions influence investment, regulation, and public perception of technology.


The Laplace Protocol: Systematic verification of common claims about artificial intelligence through the lens of scientific data, historical context, and interdisciplinary analysis to separate facts from fiction.


Deep Dive

The Nature of Myths: From Ancient Times to the Digital Era — Why Our Brains Create Illusions About AI

Definition and Functions of Myths in Society

A myth is a traditional narrative that explains the origin of phenomena through supernatural events. In the context of technology, myths simplify complex concepts into understandable but often distorted narratives.

Myths have long served an explanatory and educational function across cultures. Modern AI myths help people cope with the cognitive load of rapid progress by offering simple explanations for complex systems, but this simplification often produces persistent misconceptions about what these systems can and cannot do.

AI myths operate on the same principle as ancient myths: they fill gaps in understanding when information is unavailable or too complex for quick comprehension.

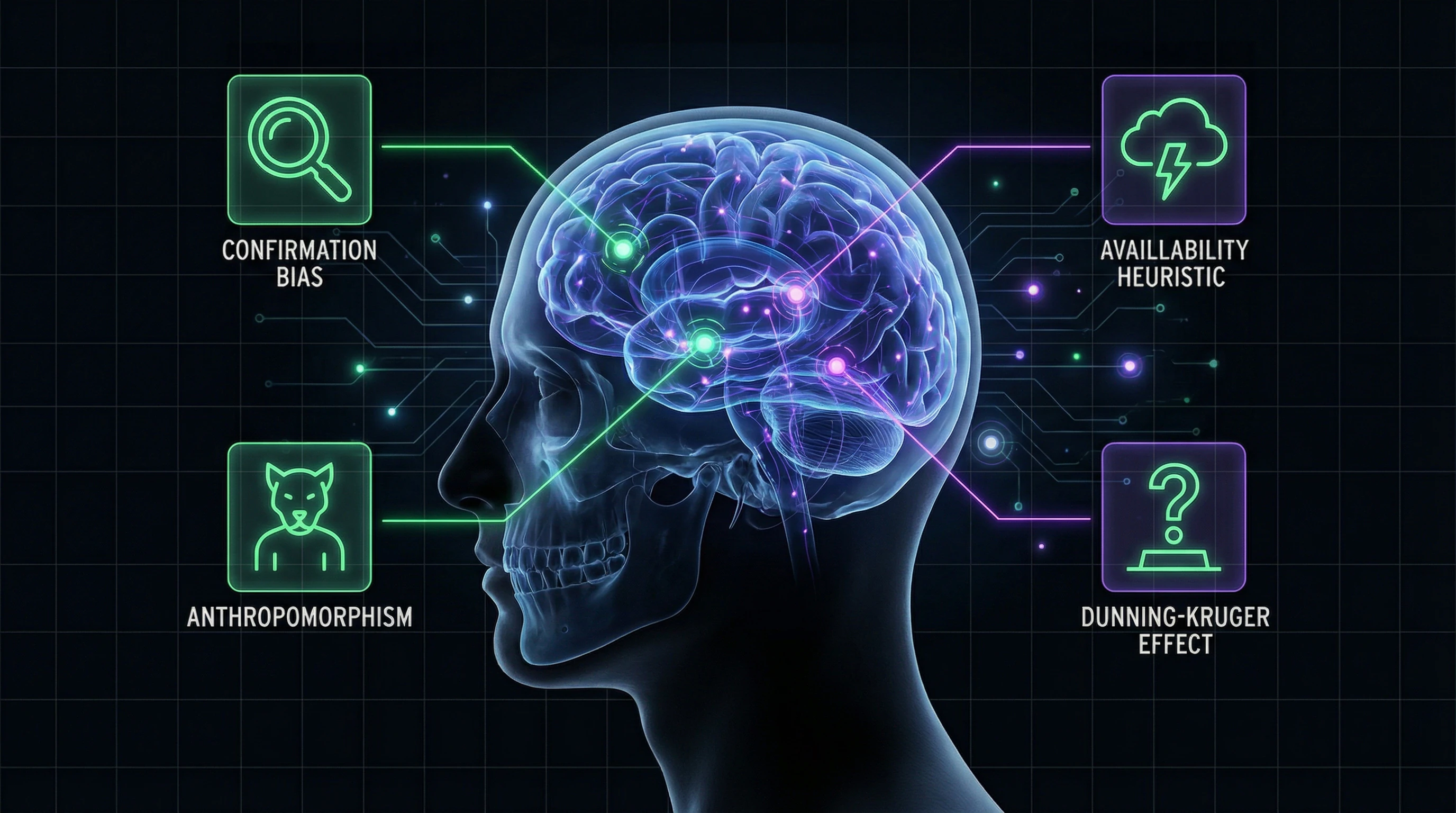

Mechanisms of Technological Misconception Formation

The human brain is prone to anthropomorphization — attributing human qualities to non-human objects. Historical misconceptions, such as the myth of horned Viking helmets or Nero setting fire to Rome, demonstrate the persistence of false narratives even in the face of contradictory evidence.

These same cognitive bias mechanisms operate today: people attribute consciousness, intentions, and capabilities to AI systems that they do not possess.

| Bias Mechanism | Manifestation in Ancient Myths | Manifestation in AI Myths |
|---|---|---|
| Anthropomorphism | Gods with human emotions and flaws | Chatbot understands and empathizes like a human |
| Sensationalism | Dramatized stories of heroes and catastrophes | Headlines about AI apocalypse instead of technical details |
| Information Vacuum | Absence of written sources in oral culture | Deficit of peer-reviewed research in public discourse |

- Anthropomorphism in AI perception. The brain automatically applies human categories to unfamiliar objects. When a chatbot responds coherently, we intuitively assume the presence of understanding and intention, even though the system operates on statistical patterns.

- Media sensationalism. Technological myths are amplified through feedback loops: sensational headlines receive more attention than accurate technical explanations. Popular misconceptions spread faster than scientifically grounded information.

- Primary source deficit. The absence of peer-reviewed research in public discourse leaves an information vacuum that becomes filled with speculation and reinterpretation.

When popular sources mix mythology with AI technology, the boundary between fact and fiction blurs further.

Historical Myths and Their Parallels with AI Misconceptions

Viking Horned Helmets and AI Anthropomorphization

The myth of Vikings wearing horned helmets is a classic example of visual dramatization displacing historical accuracy. Archaeological evidence does not support the use of such helmets in battle.

Modern depictions of AI as humanoid robots with "eyes" and "faces" operate on the same logic. Popular culture simplifies generative AI into anthropomorphic images, distorting understanding of how neural networks and machine learning algorithms actually work.

| Parameter | Vikings and Horned Helmets | AI and Anthropomorphism |
|---|---|---|
| Source of Myth | Visual dramatization in art and film | Science fiction and popular culture |
| What Gets Displaced | Historical accuracy | Technical reality of neural networks |
| Consequence | Distorted understanding of history | Inflated expectations and inadequate risk assessment |

Anthropomorphization leads to overlooking real problems. When people perceive AI as a "thinking machine" with intentions, they miss data bias, limitations in contextual understanding, and absence of true comprehension.

Improvements in AI image generation quality represent technical progress in pattern processing, not development of "creative consciousness." This distinction is critical for adequate assessment of capabilities and limitations.

The Nero Myth and Fears of Autonomous Systems

The claim that Nero set fire to Rome remains disputed. It persists because of the dramatic narrative of a malevolent ruler, not because of historical evidence.

Modern fears of "machine uprising" and autonomous AI systems follow the same logic: dramatizing potential risks while ignoring technical realities. Panic based on science fiction scenarios prevents constructive discussion of actual problems.

Scientific community consensus: current AI systems do not possess autonomous goals or intentions. Real risks relate to how people use these tools.

Algorithmic discrimination, information manipulation, concentration of power in tech corporations—these are what require attention. The Nero myth persisted for centuries due to lack of critical source analysis; modern AI myths spread due to insufficient technical literacy and prevalence of sensational narratives.

Sparta and the Myth of "Perfect AI"

Simplified historical narratives about Sparta create a myth of a "perfect selection system," ignoring the complexity of actual Spartan culture. Similarly, the myth of "perfect AI" ignores fundamental limitations of any machine learning system.

- Creator bias. Every algorithm carries the assumptions and values of its developers. This is not a bug but a built-in feature of any human-created system.

- Data distortions. Training data contains historical and social biases. AI does not correct them—it reproduces and scales them.

- Objectivity as illusion. No system can be "objective" by definition. Open collaboration is important but does not guarantee perfection.
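To make the data-distortion point concrete, here is a toy sketch in Python. The records, group labels, and 0.6 threshold are all hypothetical, and the "model" is plain frequency counting rather than a real machine learning system, but it shows the mechanism: a model fitted to skewed historical decisions reproduces the skew, and a decision threshold can turn a gap in rates into an all-or-nothing difference.

```python
from collections import defaultdict

# Hypothetical historical records: (group, qualified, hired).
# Equally qualified candidates from group "B" were hired less often.
HISTORY = (
    [("A", True, True)] * 80 + [("A", True, False)] * 20
    + [("B", True, True)] * 50 + [("B", True, False)] * 50
)


def fit_hire_rates(records):
    """'Train' by counting: estimate P(hired | group, qualified) from the records."""
    hired = defaultdict(int)
    total = defaultdict(int)
    for group, qualified, was_hired in records:
        total[(group, qualified)] += 1
        hired[(group, qualified)] += int(was_hired)
    return {key: hired[key] / total[key] for key in total}


def recommend(model, group, qualified, threshold=0.6):
    """Recommend hiring when the learned rate clears a (hypothetical) threshold."""
    return model[(group, qualified)] >= threshold


model = fit_hire_rates(HISTORY)
print(model)                        # learned rates: A -> 0.8, B -> 0.5
print(recommend(model, "A", True))  # True
print(recommend(model, "B", True))  # False: the historical gap is reproduced
```

Nothing in the code mentions groups being treated differently; the disparity comes entirely from the training records, which is the point the list above makes.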

In both cases, the myth of perfection arises from the desire to find simple solutions to complex problems. History shows that such solutions usually carry a hidden cost.

Myths About Modern AI Capabilities — Where Reality Ends

Generative AI and the Illusion of Creativity

Generative AI systems create new content — text, images, code — based on training data and prompts. But the myth that they "create" in the human sense is fundamentally incorrect.

AI lacks intentionality, aesthetic judgment, or understanding of cultural context. It performs statistical interpolation of patterns from training data — a technical achievement in algorithm optimization, not the development of machine creativity.
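A deliberately tiny illustration of "statistical interpolation of patterns": the Python sketch below is a bigram model, vastly simpler than the neural networks behind real generative systems, and the corpus is invented for the example. It can emit word sequences that never appear verbatim in its training text, yet every step it takes is a word pair it has already seen; nothing in it represents meaning or intent.

```python
import random
from collections import defaultdict

# Hypothetical training text, invented for this illustration.
CORPUS = (
    "the model predicts the next word . "
    "the model learns patterns from data . "
    "patterns in data shape the next word ."
)


def train_bigrams(text):
    """Map each word to the list of words that followed it in the corpus."""
    words = text.split()
    table = defaultdict(list)
    for current, following in zip(words, words[1:]):
        table[current].append(following)
    return table


def generate(table, start, length, seed=0):
    """Sample a sequence by repeatedly picking one of the observed successors."""
    rng = random.Random(seed)
    out = [start]
    for _ in range(length - 1):
        successors = table.get(out[-1])
        if not successors:  # no observed continuation: stop early
            break
        out.append(rng.choice(successors))
    return out


table = train_bigrams(CORPUS)
print(" ".join(generate(table, "the", 8)))
```

Frequent successors are picked more often simply because they appear more often in each list; that frequency, not understanding, is what drives the output.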

The myth of "creative AI" is dangerous: it devalues the work of artists, writers, and designers by presenting their work as fully automatable. Reality: generative systems are tools that extend human capabilities, but do not replace the fundamental cognitive processes of creativity and cultural interpretation.

Developing generative AI requires interdisciplinary approaches, which underscores its instrumental nature: it is a technology that depends on human expertise across many domains to be applied effectively.

Limits of Machine Learning and the Myth of Omniscient AI

Modern AI systems perform tasks that typically require human intelligence: visual perception, speech recognition, decision-making, language translation. But the myth of "omniscient AI" that solves any problem encounters fundamental limitations.

Machine learning systems work reliably mainly within the distribution of data on which they were trained; on inputs far outside that distribution, their performance often degrades sharply. The research consensus is that modern AI remains far from artificial general intelligence.

| Limitation | Consequence |
|---|---|
| Optimization for specific tasks | Lack of universality |
| Limited logical reasoning | Dependence on patterns in data |
| Need for ongoing adjustment | Full autonomy is not achievable |
| Weak generalization beyond training data | Unreliable behavior on new distributions |
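The generalization limit can be illustrated with a deliberately simple stand-in for a learned model. In this Python sketch (all values hypothetical), a straight line is fitted by ordinary least squares to samples of y = x² taken only from the range 0 to 1. Inside that range its predictions are close; far outside it they are badly wrong. Real neural networks fail in more complex ways, but the underlying issue, no training signal outside the observed distribution, is the same.

```python
def fit_line(xs, ys):
    """Ordinary least squares fit of y = a * x + b; returns (a, b)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    a = cov / var
    return a, mean_y - a * mean_x


def true_process(x):
    """The real relationship; the model only ever sees it on [0, 1]."""
    return x * x


train_x = [i / 10 for i in range(11)]  # training inputs: 0.0, 0.1, ..., 1.0
train_y = [true_process(x) for x in train_x]
a, b = fit_line(train_x, train_y)

for x in (0.5, 10.0):  # inside vs. far outside the training range
    predicted = a * x + b
    error = abs(predicted - true_process(x))
    print(f"x={x}: predicted {predicted:.2f}, actual {true_process(x):.2f}, error {error:.2f}")
```

Here the fitted line is off by about 0.1 at x = 0.5 but by about 90 at x = 10: the same model, the same training, very different reliability depending on where the input falls.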

In public discourse, mixing sources on mythology and AI technology blurs the line between ancient narratives and modern realities. The absence of primary academic sources in popular AI discussions spreads simplified representations that do not reflect the complexity of real systems.

Misconceptions About AI Risks and Threats — Why Apocalyptic Scenarios Overshadow Real Problems

Apocalyptic Scenarios versus Real Risks of Current Systems

Popular culture has created a persistent narrative about AI as an existential threat to humanity. The real risks of current systems, by contrast, stem from data bias, algorithmic opacity, and the socioeconomic consequences of automation.

Conflating science fiction scenarios with technical limitations of current systems diverts attention from pressing issues — discrimination in hiring algorithms, lending, content moderation. Current AI systems lack autonomous goal-setting and remain tools whose quality depends entirely on training data and human oversight.

| Real Risks | Hypothetical Threats | Consequence of Misplaced Focus |
|---|---|---|
| Data bias, decision opacity, amplification of social inequalities | Uprising of a superintelligent agent, existential scenarios | Resources go to philosophical debates instead of developing verification and audit methods |

Empirical evidence indicates that the most significant current risks lie in automated decision-making systems amplifying existing inequalities. Concrete technical problems of generative AI, such as model hallucinations, reproduction of toxic content, and vulnerability to adversarial attacks, require immediate attention.

Ethical Dilemmas and Their Exaggeration in Public Discourse

Media coverage often exaggerates philosophical dilemmas like the "trolley problem" for autonomous vehicles. More mundane but critically important questions are ignored: transparency, accountability, fairness of algorithmic systems.

Public attention centers on the future rather than the present. This gap between theory and practice creates a paradox: real ethical violations in existing systems go without proper attention.

Some academic discussions of AI ethics focus on hypothetical scenarios, and popular perception amplifies fear of existential threats. Many research centers, however, are developing interdisciplinary approaches focused on practical mechanisms: ensuring fairness, explaining decisions, and protecting user privacy.

These tools apply to systems that already affect millions of lives through recommendations, moderation, and automated management. Shifting focus from apocalyptic scenarios to current problems isn't a denial of future risks but a matter of priority: first fix what is already going wrong.

The Role of MIT and Academic Institutions in Debunking Myths — How Research Shapes Realistic Understanding of Technologies

Research in AI System Transparency and Explainability

MIT and other academic centers systematically dismantle the myth of the "black box" as an inevitable property of AI. In reality, opacity reflects a tradeoff between performance and interpretability, and that tradeoff is weighed differently depending on the application domain.

Users and regulators don't require complete understanding of a model's mathematics, but sufficient explanation to assess the reliability and fairness of decisions in a specific context.

| Explainability Level | Required Detail | Typical Domains |
|---|---|---|
| Minimal | General description of logic | Content recommendations |
| Medium | Key decision factors | Credit scoring, hiring |
| Maximum | Complete computational trace | Medicine, justice |
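At the "Medium" level in the table above, "key decision factors" can be as simple as listing which inputs moved a score the most. A minimal Python sketch, assuming a hypothetical linear credit score: the feature names, weights, and applicant values are invented for illustration, and real scoring models and explanation methods are usually more involved. For a linear model, each feature's contribution is just its weight times its value, so this explanation is exact.

```python
# Hypothetical weights of a linear credit score (features assumed standardized).
WEIGHTS = {
    "income": 0.4,
    "debt_ratio": -0.7,
    "late_payments": -0.5,
    "years_employed": 0.2,
}


def score_with_factors(applicant, top_k=2):
    """Return the score and the top_k features with the largest absolute contribution."""
    contributions = {name: WEIGHTS[name] * applicant[name] for name in WEIGHTS}
    score = sum(contributions.values())
    ranked = sorted(contributions, key=lambda name: abs(contributions[name]), reverse=True)
    return score, [(name, round(contributions[name], 2)) for name in ranked[:top_k]]


# Hypothetical applicant.
applicant = {"income": 1.2, "debt_ratio": 1.5, "late_payments": 0.0, "years_employed": 2.0}
score, factors = score_with_factors(applicant)
print(round(score, 2), factors)
```

An applicant can be told that a high debt ratio lowered the score most, without seeing any of the underlying mathematics, which is the kind of "sufficient explanation" the text describes.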

Development of tools for algorithm auditing and bias testing is becoming a priority. MIT creates open datasets and benchmarks for fairness evaluation, standardizing methods for verifying and comparing approaches to minimizing discrimination.
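Fairness benchmarks typically rest on simple, auditable metrics. As an illustration only (not a description of any specific MIT dataset or tool), this Python sketch computes one common metric, the demographic parity difference: the gap between groups in the share of positive decisions. The audit sample is hypothetical. A value of 0 means equal selection rates; on its own it says nothing about whether the underlying decisions were justified, which is why audits usually combine several metrics.

```python
from collections import Counter


def selection_rates(decisions):
    """Share of positive decisions per group; decisions is a list of (group, approved)."""
    totals, positives = Counter(), Counter()
    for group, approved in decisions:
        totals[group] += 1
        positives[group] += int(approved)
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_difference(decisions):
    """Largest gap in selection rate between any two groups (0 means parity)."""
    rates = selection_rates(decisions)
    return max(rates.values()) - min(rates.values())


# Hypothetical audit sample of a credit-scoring model's outputs.
audit = (
    [("A", True)] * 60 + [("A", False)] * 40
    + [("B", True)] * 35 + [("B", False)] * 65
)
print(selection_rates(audit))
print(demographic_parity_difference(audit))
```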

Technical solutions cannot be "neutral" or "objective" by default. Fairness requires active design at all stages of development.

Interdisciplinary Approaches to Understanding Sociotechnical Systems

Academic institutions are redefining AI as a sociotechnical system, where technology is inseparably linked to social practices, institutional structures, and cultural contexts.

- Knowledge integration: computer science, sociology, ethics, law, and economics work as a unified front, not in parallel.

- Rejection of technological determinism: technologies don't develop independently of social choices; every design decision a system's architects make is also a political act.

- Multiple trajectories: the future of AI is not predetermined. Policy decisions today shape possible development paths tomorrow.

Open collaboration between academia, industry, and civil society creates feedback mechanisms that adjust research directions according to real needs.

MIT actively democratizes access to AI through educational programs, open publications, and open-source tools. This counters the myth of AI as an exclusive domain of a narrow circle of experts.

Broad participation of diverse stakeholders in shaping the future of technologies is not an ideal, but a practical necessity for adequate system development.

Building Critical Thinking About Technology — Practical Tools for Navigating the Information Landscape

Methods for Verifying Information and Evaluating AI Sources

Critical evaluation of AI information begins with distinguishing between sources: peer-reviewed academic publications, corporate technical reports, journalistic materials, and speculative forecasts have different degrees of reliability and different communication objectives.

Fact-checking requires four steps: identifying primary data sources, evaluating research methodology, analyzing authors' conflicts of interest, and comparing claims with expert community consensus.

- Find the original research, not a retelling in popular media

- Check who funded the work and what interests the authors have

- Compare conclusions with independent sources and other studies

- Distinguish core findings from speculation in headlines

The absence of primary academic sources in popular AI discussions creates a favorable environment for myth propagation. Active search for original research and technical documentation is a necessary condition for verifying claims.

Red Flags of Unreliable Information

Key indicators of problematic AI content:

| Indicator | What It Means |
|---|---|
| Absolute claims without limitations | "AI can do everything" instead of "AI excels at X but struggles with Y" |
| Anthropomorphization of algorithms | Attributing consciousness, desires, intentions to systems |
| Lack of technical details with bold claims | Grand promises without explaining how it works |
| Ignoring context and conditions | Lab results ≠ real-world results |

Reliable sources provide balanced information about achievements and limitations, include comments from independent experts, and reference original publications.

Developing critical reading skills requires understanding basic machine learning concepts, which enables distinguishing real breakthroughs from marketing exaggerations.

Educational Initiatives and Media Literacy Development

Academic institutions are developing media literacy programs adapted for understanding AI: basic machine learning concepts, algorithmic principles, methods for critically evaluating technological claims.

Open online courses make AI knowledge accessible to broad audiences, contributing to informed public opinion formation and reducing the influence of myths.

Effective educational programs focus not on technical implementation details but on developing conceptual understanding of technology capabilities and limitations — this enables people without specialized education to participate in discussions about AI's social consequences.

Long-term strategy requires integrating fundamentals of computer literacy and critical thinking into basic education from the school level. This shapes a generation of citizens capable of informed participation in technological debates.

Interdisciplinary approaches emphasize connections between technology and social sciences, ethics, and law. They debunk the myth of technologies as neutral tools and demonstrate their embeddedness in social relations and values.

Successful initiatives create communities of practice where participants exchange experiences in critical information analysis, collectively verify facts, and support each other in navigating the complex information landscape of modern technology.


Frequently Asked Questions

What is an AI myth?

An AI myth is a widespread misconception about the capabilities or threats of artificial intelligence that doesn't align with the facts. Such myths often exaggerate AI's capacity for self-awareness or underestimate its limitations. The mechanisms behind them mirror historical misconceptions, from Viking horned helmets to Nero's fire (S1, S2).

Does modern AI have consciousness, and does it create like a human?

No. Modern AI lacks consciousness and doesn't create in the human sense. Generative models analyze patterns in data and produce new combinations, but without understanding meaning. MIT research shows that even advanced systems operate on statistical regularities rather than creative thinking (S3, S5).

Could AI rise up against humanity?

This is one of the major myths, and it has no scientific basis. Modern AI is a narrow-purpose tool without autonomous goals or self-awareness. Real risks stem from human misuse of technology, not from a machine uprising (S4).

How can I check whether information about AI is reliable?

Consult academic sources like MIT News and peer-reviewed research. Check whether claims are confirmed by independent experts and include technical implementation details. Critically evaluate sensational headlines and demand evidence for specific capabilities (S5, S6).

How do historical myths relate to AI myths?

The Viking horned helmet myth parallels AI anthropomorphization: attributing human qualities to machines. The story of Nero and Rome's fire mirrors fears of autonomous systems that lack a real foundation. Both cases show how easily persistent misconceptions form (S2).

What role does MIT play in debunking AI myths?

MIT conducts interdisciplinary research on AI system transparency and explainability. The university publishes findings in open access and develops educational programs to foster critical thinking. Special emphasis is placed on creating methods to verify claims about technology capabilities (S5, S6).

Why do myths about technology arise?

The mechanisms behind technology myths mirror ancient ones: knowledge gaps, fear of the unknown, and oversimplification of complex phenomena. Media often amplify misconceptions for the sake of sensationalism. Evolutionarily, humans tend to see patterns and intentions even where none exist (S1, S6).

What are the real risks of AI?

The primary risks include algorithmic bias, privacy violations, and job displacement in certain sectors. Deepfakes and information manipulation also pose problems. These threats require regulation but have nothing to do with apocalyptic fantasy scenarios (S4).

How can I tell a quality article about AI from myth-making?

Quality articles contain research citations, independent expert opinions, and technical details. Myth-making relies on emotional headlines and generalizations and lacks specifics. Check the author's credentials and whether the material underwent peer review (S6).

Can AI replace human creativity?

No. AI complements the creative process but doesn't replace it. Generative models produce content based on existing data but lack original vision and cultural context. Research shows that human-machine collaboration is most effective (S3, S5).

Where does the myth of conscious AI come from?

The myth emerged from science fiction and from companies' marketing exaggerations. Anthropomorphization is a natural human tendency to attribute consciousness to complex systems. Media amplify the misconception by using terms like "thinks" and "understands" when referring to algorithms (S1, S2).

Which educational initiatives help counter AI myths?

MIT and other universities are launching open courses on machine learning fundamentals and AI ethics. Media literacy programs teach critical evaluation of technological claims. Science communication projects that explain algorithmic principles in accessible language are also important (S6).

Is AI smarter than humans?

This is a myth: AI surpasses humans only in narrow tasks such as playing chess or pattern recognition. In general intelligence, adaptability, and contextual understanding, machines fall significantly short. The limits of machine learning stem from its dependence on data quality and its limited capacity for abstract reasoning (S3).

Why are ethical dilemmas around AI exaggerated?

Media often focus on hypothetical scenarios like the "trolley problem," ignoring real issues of bias and transparency. This exaggeration creates the impression of unsolvable contradictions, even though many questions can be addressed through regulation. Academic research offers practical approaches to AI ethics (S4, S5).

What is open-source AI, and why does it matter?

Open-source AI refers to models and tools whose source code is public and available for study and modification. This matters for transparency, safety verification, and democratization of technology. MIT actively supports open-source approaches to prevent monopolization and to debunk myths by making knowledge accessible (S5).

Do myths affect how AI develops?

Yes. Myths distort perception of real risks and technological possibilities. Exaggerating threats slows beneficial innovation, while underestimating problems leads to regulatory negligence. Developing critical thinking through education is key to safe AI development (S4, S6).