Physiognomy and AI: What Users Are Actually Searching For and Why This Query Signals a Critical Problem in the Academic Ecosystem

The search query "pdf physiognomy in the age of ai researchgate" reflects an attempt to find academic work linking the ancient practice of physiognomy with modern artificial intelligence technologies. Physiognomy is a discredited belief system claiming that a person's facial features can reveal their character, intelligence, criminal tendencies, or moral qualities.

Historically, this practice was used to justify racial segregation, eugenics, and discrimination (S007). Today it's returning not as overt manifestos, but disguised as algorithmic objectivity.

⚠️ What Modern "Digital Physiognomy" Looks Like in the AI Context

In the machine learning era, physiognomy has gained new life through facial recognition algorithms that supposedly can determine personality characteristics, sexual orientation, political views, or criminal propensity based on photo analysis (S001).

These systems masquerade as "objective data analysis," using neural network terminology and claims of statistical significance, but they essentially reproduce the same discredited assumptions about the connection between appearance and internal qualities. Biometric recognition becomes a tool through which old prejudices gain new legitimacy.

- Digital Physiognomy: The use of computer vision algorithms to infer personality or behavioral characteristics from facial analysis. It is dangerous because the technological veneer conceals the absence of any causal relationship between appearance and internal qualities.

- ResearchGate as a Distribution Vector: The platform creates an appearance of academic legitimacy for work that hasn't undergone rigorous peer review. A preprint uploaded there gains an aura of scientific credibility simply by appearing on a platform with millions of users.

🔎 Why ResearchGate Becomes a Channel for Spreading Pseudoscientific Practices

ResearchGate positions itself as a platform for sharing scientific work, but the absence of strict pre-publication peer review turns it into a dumping ground for unverified materials (S003). Any researcher can upload a preprint that will gain the appearance of academic legitimacy simply by being hosted on the platform.

This creates an illusion of scientific consensus around ideas that haven't undergone critical review. Readers see many citations, a high author h-index, and attractive graphs, and they assume the work passed a quality filter. In reality, there is no filter.

🧩 The Substitution Mechanism: How Pseudoscience Masquerades as AI Research

The key tactic is using technical jargon and data visualizations to create an impression of scientific rigor. A paper may contain classification accuracy graphs, descriptions of neural network architectures, references to datasets — while being based on fundamentally flawed assumptions about causal relationships (S006).

| Sign of Legitimate Research | Sign of Masquerade in AI-Physiognomy |
|---|---|
| Clearly formulated hypothesis about the mechanism of connection | Assumes the algorithm will "find" the connection itself, without theoretical justification |
| Confounders controlled (age, lighting, camera angle) | Uses "raw" dataset with multiple uncontrolled variables |
| Results reproducible on independent samples | Testing only on one dataset or on a subsample of the same dataset |
| Limitations and alternative explanations discussed | Results presented as definitive proof |

The problem is compounded by the fact that generative AI systems can create plausible-looking research texts without a real empirical basis. An article can be written convincingly, contain references to real work (often distorting their meaning), and look like the result of serious research.

This isn't just a question of publication quality. AI ethics and safety directly depend on what assumptions are embedded in algorithms. If a system is trained on work reproducing historical prejudices, it will scale them.

Steelman Analysis: Seven Arguments Used to Justify "Scientific Physiognomy" in the AI Era — and Why They Seem Convincing

To understand why pseudoscientific work on physiognomy finds an audience in academic circles, we need to examine the strongest versions of proponents' arguments. This doesn't mean agreeing with these positions, but it allows us to identify the mechanism of persuasion and weak points in the logic.

- "Correlations exist objectively — we're just measuring them". If an algorithm detects statistical correlations between facial features and characteristics in a dataset, this is supposedly an objective fact. The model "simply finds patterns" without bias. An appeal to positivism: if a correlation is reproducible, it deserves study.

- "Modern datasets and computational power make the analysis fundamentally different". Instead of subjective assessments by a few observers — millions of images and deep neural networks revealing subtle patterns. The scale of data supposedly overcomes past limitations.

- "Genetics and embryonic development link face and brain". The face and brain form from the same embryonic tissues, subject to the same genetic factors. Therefore, correlations between morphology and neurocognitive characteristics are possible. Sounds scientific and harder to refute without specialized knowledge.

- "Practical applications justify the research". Potential applications: improved security, early diagnosis of disorders, personalized education. A utilitarian argument: if technology can save lives, ethical objections are secondary.

- "Criticism is based on political correctness, not science". Opponents are motivated by ideology, afraid of "inconvenient truths" about biological differences. This frame turns critics into opponents of scientific progress.

- "People already use physiognomy intuitively — AI just formalizes it". People constantly judge others by appearance. If these judgments contain predictive power, algorithmization can make the process fairer by eliminating individual biases. A paradox: AI physiognomy positions itself as fighting discrimination.

- "Banning research won't stop development — better to regulate openly". Technology will develop in closed labs. Public research allows society to understand risks and form regulatory frameworks. A ban drives the problem underground.

Each of these arguments contains seeds of logic that make them convincing to people unfamiliar with the history of physiognomy or machine learning methodology. That's precisely why they work in academic environments.

The first four arguments appeal to technological optimism and a positivist philosophy of science: if we can measure it, it must be real, and if it is useful, it is justified. The last three rely on social rhetoric: accusing critics of ideology, recasting automation as a remedy for human bias, and appealing to pragmatism.

The problem is that these arguments ignore the fundamental difference between correlation in a dataset and causal relationship in reality. A dataset is not nature, but a social artifact reflecting historical biases, data collection methods, and variable selection. An algorithm doesn't "discover truth" — it reproduces the structure of the data it was trained on.

The connection between AI physiognomy and the return of phrenology shows that data scale and computational power don't solve methodological problems — they exacerbate them, giving false scientific credibility to results. The genetic argument sounds convincing but ignores that population-level correlation doesn't predict individual characteristics, and social factors (nutrition, stress, lifestyle) affect both face and behavior independently of each other.

The utilitarian argument about practical benefits contains a hidden premise: that the technology actually works. But if the foundation is unreliable, application amplifies harm. Accusing critics of political correctness is a classic technique that shifts discussion from methodology to ideology, avoiding specific scientific objections.

The argument about "formalizing intuition" is paradoxical: if people already discriminate based on appearance, algorithmization doesn't eliminate discrimination but scales it, giving it an appearance of objectivity. This amplifies harm rather than reducing it.

The last argument about open regulation makes sense, but only if research is methodologically sound. If it's not, publicity doesn't save it — it legitimizes pseudoscience. Regulation must start with the criterion: does this actually work? — not with the assumption that it works and attempts to control it.

Understanding these arguments is important not for refuting them (that's the task of following sections), but for diagnosis: why intelligent people accept them. The answer lies in cognitive traps these arguments exploit — and in how AI ethics and safety require critical analysis, not blind trust in technology.

Evidence Base: What the Data Actually Says About the Link Between Appearance and Personality Traits — and Why Most "AI Physiognomy" Studies Are Methodologically Unsound

Critical analysis of the empirical evidence shows that the vast majority of studies claiming AI can determine personality traits from faces suffer from fundamental methodological problems that invalidate their conclusions.

🧪 Problem 1: Confusing Correlation with Causation in the Presence of Multiple Confounders

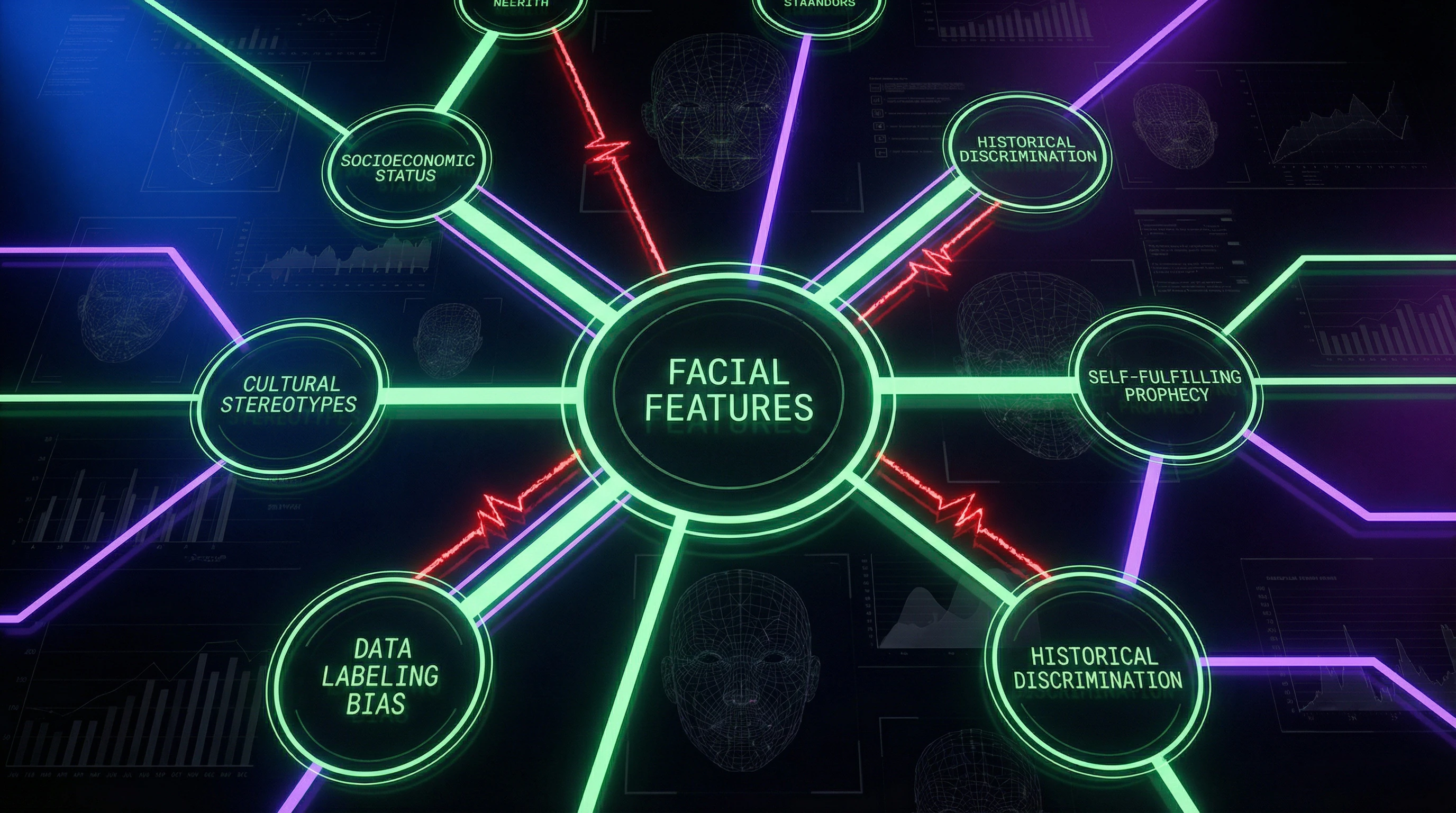

Even if an algorithm detects a statistical association between facial features and a particular characteristic in a dataset, this does not establish a causal relationship. Faces may correlate with socioeconomic status (through access to cosmetic procedures, nutrition, healthcare), which in turn correlates with education, opportunities, and subsequently with the measured characteristics.

The model "predicts" not internal qualities, but social context (S001).
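A small simulation can make the confounding argument concrete. The sketch below is illustrative only: `ses`, `face`, and `outcome` are synthetic, hypothetical variables (not data from any cited study), and the helper names are assumptions introduced here. Both the facial feature and the outcome are generated from the same confounder and have no direct link, yet they correlate until the confounder is regressed out.

```python
import random

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / (vx * vy) ** 0.5

def residuals(ys, xs):
    """Residuals of ys after a simple least-squares fit on xs."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    slope = (sum((x - mx) * (y - my) for x, y in zip(xs, ys))
             / sum((x - mx) ** 2 for x in xs))
    return [y - my - slope * (x - mx) for x, y in zip(xs, ys)]

rng = random.Random(0)
n = 5000
ses = [rng.gauss(0, 1) for _ in range(n)]      # hypothetical confounder (socioeconomic status)
face = [s + rng.gauss(0, 1) for s in ses]      # facial feature: confounder + noise
outcome = [s + rng.gauss(0, 1) for s in ses]   # measured trait: confounder + noise

raw_r = pearson(face, outcome)                 # correlation a naive model would exploit
partial_r = pearson(residuals(face, ses),      # correlation left after removing the
                    residuals(outcome, ses))   # part explained by the confounder

print(f"raw r = {raw_r:.2f}, partial r = {partial_r:.2f}")
```

In this toy setup the raw correlation is substantial while the partial correlation is close to zero, which is the pattern one would expect if a model were predicting social context rather than internal qualities.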

📊 Problem 2: Datasets Reflect Existing Biases, Not Objective Reality

Machine learning systems are trained on data created by humans with biases. If in the training dataset people with certain facial features are more often labeled as "criminal" due to police or judicial bias, the model will learn to reproduce this bias rather than discover a genuine relationship.

This is not "objective measurement," but automated discrimination (S004).
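The mechanism can be sketched with synthetic records. Everything below is a hypothetical illustration: the groups, the offense rate, and the `DETECTION` probabilities are invented numbers chosen to show the effect, not estimates from any source. Both groups offend at the same rate, but one is labeled more often, and any model fit to the labels would inherit that gap.

```python
import random

rng = random.Random(1)
TRUE_RATE = 0.10                      # identical underlying rate in both groups (assumed)
DETECTION = {"A": 0.3, "B": 0.6}      # hypothetical: group B is policed twice as heavily

def make_records(n):
    """Generate (group, actually_offended, labeled_criminal) tuples."""
    records = []
    for _ in range(n):
        group = rng.choice("AB")
        offended = rng.random() < TRUE_RATE
        labeled = offended and rng.random() < DETECTION[group]
        records.append((group, offended, labeled))
    return records

def rate(records, group, index):
    """Share of a group's records for which the field at `index` is True."""
    rows = [r for r in records if r[0] == group]
    return sum(r[index] for r in rows) / len(rows)

records = make_records(20000)
true_rates = {g: rate(records, g, 1) for g in "AB"}      # what actually happened
learned_rates = {g: rate(records, g, 2) for g in "AB"}   # what the labels teach a model

print("true:   ", true_rates)
print("learned:", learned_rates)
```

A classifier trained on the `labeled` column would score group B as roughly twice as "criminal" as group A even though the true rates match, because the only signal in the labels is the labeling process itself.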

For more on the mechanisms of such bias, see the analysis of biometric facial recognition.

🧬 Problem 3: Biological Arguments Are Not Supported by Genetic Research

While the face and brain do develop from related embryonic structures, genetic studies find no significant correlations between genes affecting facial morphology and genes associated with cognitive or personality traits. Shared developmental factors do not imply functional connections in the mature organism.

The embryological argument is a biologized version of a logical fallacy (S001).

⚠️ Problem 4: Self-Fulfilling Prophecy Effects Distort All Measurements

If people with certain facial features are systematically treated differently due to existing stereotypes (for example, perceived as less competent or more aggressive), this affects their life trajectories, opportunities, and psychological state. Any detected correlation may be the result of social influence rather than an innate connection.

Observational data alone cannot cleanly separate these effects.

🔎 Problem 5: Publication Bias and P-Hacking in AI Research

Studies that find no relationship between facial features and personality traits are published less frequently than studies with "positive" results. This creates a distorted picture in the literature. Moreover, with large datasets and numerous possible features, it's easy to find statistically significant correlations by chance.

Without pre-registration of hypotheses and rigorous correction for multiple comparisons, most "discoveries" are false positives (S002).
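How easily chance produces "discoveries" can be shown with pure noise. The sketch below is a minimal illustration under stated assumptions: 200 random "facial features" are tested against a random "trait" for 100 synthetic people, and p-values use the Fisher z approximation rather than an exact test. The sample sizes and names are hypothetical.

```python
import math
import random

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / (vx * vy) ** 0.5

def p_value(r, n):
    """Approximate two-sided p-value for r via the Fisher z transformation."""
    z = math.atanh(r) * math.sqrt(n - 3)
    return math.erfc(abs(z) / math.sqrt(2))

rng = random.Random(3)
n_people, n_features = 100, 200
trait = [rng.gauss(0, 1) for _ in range(n_people)]        # noise standing in for a trait score

p_values = []
for _ in range(n_features):
    feature = [rng.gauss(0, 1) for _ in range(n_people)]  # noise standing in for a facial feature
    p_values.append(p_value(pearson(feature, trait), n_people))

alpha = 0.05
naive_hits = sum(p < alpha for p in p_values)                    # uncorrected threshold
bonferroni_hits = sum(p < alpha / n_features for p in p_values)  # Bonferroni-corrected threshold

print(f"significant without correction: {naive_hits} of {n_features}")
print(f"significant with Bonferroni:    {bonferroni_hits} of {n_features}")
```

With no real relationship anywhere in the data, roughly 5% of the uncorrected tests are still expected to clear p < 0.05, while the corrected threshold removes most or all of them. Bonferroni is used here only because it is the simplest correction to show; it is not the only option.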

🧾 Problem 6: Lack of Reproducibility in Independent Samples

The critical test of any scientific claim is reproducibility on independent data. Most "AI physiognomy" studies fail this test: models trained on one dataset show sharp drops in accuracy on other samples, especially from different cultural contexts.

This indicates that models are learning specific artifacts of particular datasets rather than universal patterns (S001).
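The difference between a same-dataset split and a truly independent sample can be sketched as follows. This is a toy model under explicit assumptions: labels are coin flips, and the single hypothetical feature `brightness` tracks the label only because of how one dataset was collected (for example, two photo sources with different lighting). The threshold "classifier" stands in for whatever a real model would learn.

```python
import random

rng = random.Random(2)

def make_dataset(n, artifact_strength):
    """Random labels; 'brightness' shifts with the label only via a collection artifact."""
    data = []
    for _ in range(n):
        label = rng.random() < 0.5
        brightness = rng.gauss(0, 1) + (artifact_strength if label else 0.0)
        data.append((brightness, label))
    return data

def fit_threshold(data):
    """Decision threshold at the midpoint between the two class means."""
    pos = [x for x, y in data if y]
    neg = [x for x, y in data if not y]
    return (sum(pos) / len(pos) + sum(neg) / len(neg)) / 2

def accuracy(data, threshold):
    """Share of examples where 'brightness above threshold' matches the label."""
    return sum((x > threshold) == y for x, y in data) / len(data)

train = make_dataset(4000, artifact_strength=2.0)
held_out = make_dataset(4000, artifact_strength=2.0)     # same source, fresh split
independent = make_dataset(4000, artifact_strength=0.0)  # different source, no artifact

threshold = fit_threshold(train)
acc_split = accuracy(held_out, threshold)
acc_independent = accuracy(independent, threshold)

print(f"held-out split: {acc_split:.2f}, independent sample: {acc_independent:.2f}")
```

The held-out split shares the artifact, so accuracy stays high; on the independent sample it falls to about chance. A train-test split therefore cannot distinguish a real pattern from a dataset-specific shortcut.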

📌 What Systematic Reviews and Meta-Analyses Show

Systematic reviews of the literature on the relationship between facial characteristics and personality traits show that after controlling for methodological problems, effects either disappear or become too small to have practical significance. Reported effects typically explain less than 5% of the variance, which makes individual predictions meaningless even when group differences are statistically significant (S001).
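A short calculation shows why an effect of this size says almost nothing about an individual. The sketch assumes a simple linear predictor and takes the 5% figure as an upper bound; the function name is hypothetical.

```python
import math

def residual_sd_fraction(variance_explained):
    """Fraction of the outcome's standard deviation that remains unexplained
    by a linear predictor with the given R^2."""
    return math.sqrt(1.0 - variance_explained)

r_squared = 0.05                       # upper bound on explained variance quoted above
r = math.sqrt(r_squared)               # corresponding correlation coefficient
remaining = residual_sd_fraction(r_squared)

print(f"r = {r:.3f}")
print(f"prediction error shrinks by only {100 * (1 - remaining):.1f}%")
```

Explaining 5% of the variance corresponds to a correlation of about 0.22 and narrows the spread of prediction errors by only about 2.5%, so a guess informed by the face is barely better than guessing the population average.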

The context of this problem is broader: see how modern algorithms repeat the mistakes of the 19th century and the principles of responsible AI development.

The Mechanism of Delusion: Why Intelligent People Believe in "Scientific Physiognomy" — Cognitive Traps and Exploitation of Trust in Technology

Understanding the psychological mechanisms that make pseudoscientific claims about physiognomy convincing is critically important for developing effective counter-strategies.

🧩 Trap 1: Representativeness Heuristic and Illusion of Validity

People tend to trust their intuitive judgments about others based on appearance because these judgments feel fast and confident. When technology supposedly confirms these intuitions, an illusion of validity emerges: "I always felt this was true, and now science has proven it."

In reality, both intuition and algorithm may reproduce the same cultural stereotypes without any real predictive power.

⚙️ Trap 2: Technological Determinism and Belief in "Machine Objectivity"

There's a widespread misconception that computers and algorithms are free from human biases because they "just process numbers." This ignores the fact that algorithms are created by humans, trained on data collected and labeled by humans, and optimized for goals defined by humans (S008).

Every stage of algorithm development — from data selection to defining success metrics — is infused with human values and biases. "Machine objectivity" is a myth that masks developer accountability.

🔁 Trap 3: Substituting Explanation with Description Through Mathematical Formalization

When correlation is expressed through equations, graphs, and statistical metrics, it acquires the appearance of explanation. "The model achieved 73% accuracy in predicting X from facial features" sounds like a scientific discovery (S002).

In reality, this is merely a description of patterns in a specific dataset without understanding causal mechanisms. Mathematical form creates an illusion of deep understanding.

- Correlation in data ≠ causal relationship

- High accuracy on training set ≠ validity on new data

- Statistical pattern ≠ biological mechanism
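A headline accuracy figure also means little without a baseline. The sketch below uses a hypothetical class balance (70 "no" to 30 "yes") to show how much of a reported 73% could be achieved by a rule that never looks at a face; the balance is an assumption for illustration, not a property of any cited dataset.

```python
from collections import Counter

def majority_baseline(labels):
    """Accuracy obtained by always predicting the most common label."""
    counts = Counter(labels)
    return counts.most_common(1)[0][1] / len(labels)

labels = ["no"] * 70 + ["yes"] * 30    # hypothetical class balance
reported_accuracy = 0.73               # the figure used as an example in the text

baseline = majority_baseline(labels)
lift = reported_accuracy - baseline    # what the model adds beyond the trivial rule

print(f"baseline = {baseline:.2f}, lift over baseline = {lift:.2f}")
```

Under this assumed balance, always answering "no" already scores 70%, so the model contributes three percentage points. Reporting accuracy without the class distribution hides this.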

🧬 Trap 4: Biologization of Social Phenomena as Defense Against Cognitive Dissonance

Acknowledging that social inequalities result from historical and structural factors requires accepting collective responsibility and the need for systemic change. Biological explanations (including physiognomy) remove this responsibility: "It's just nature, there's nothing we can do" (S001).

This is psychologically more comfortable, especially for those who benefit from the existing order. Biologism becomes a tool for defending against cognitive dissonance, not a result of scientific analysis.

Learn more about how AI physiognomy repeats the mistakes of the 19th century, and why AI ethics requires a critical approach to such systems.

Conflicts in Sources and Zones of Uncertainty: Where Even Physiognomy Critics Disagree — and What This Means for Practice

Even among researchers criticizing physiognomic AI systems, there are disagreements on key issues. This isn't a weakness of the critique; it's a sign that the problem is more complex than it appears at first glance.

🔬 Disagreement 1: Do Any Valid Correlations Between Face and Personality Exist at All

One position: any correlations are artifacts of methodological problems and social confounders. The second: very weak but real connections are possible through hormonal influence on development or self-presentation effects (people with certain traits develop certain interaction styles in response to others' reactions).

This debate defines the boundaries of permissible research (S001). If correlations exist, even weak ones, the question becomes not "whether to study" but "how to study without harm."

📊 Disagreement 2: Is the Problem in the Idea Itself or in Current Implementation

First position: physiognomy is fundamentally flawed, methodological improvements won't save it. Second: current systems are poor due to data and model deficiencies, but theoretically more sophisticated approaches are possible.

This disagreement directly affects regulatory strategy: complete ban or strict methodological standards (S004). The choice between them is not technical but political.

🧾 Disagreement 3: The Role of Academic Platforms in Spreading Problematic Research

One side: platforms like ResearchGate should implement strict moderation and remove pseudoscientific content. The other fears this would create censorship mechanisms that could be used against legitimate but controversial research.

The balance between openness and quality remains an unsolved problem (S003). There's no universal algorithm that can distinguish "inconvenient truth" from "convenient lie."

⚙️ Uncertainty: How to Assess Risks with Rapidly Developing Technologies

Current physiognomic systems are ineffective. But it's unclear whether this will remain true with the emergence of fundamentally new approaches — integration of genetic data, longitudinal studies, neuroimaging.

The precautionary principle suggests restrictions even under uncertainty. But this conflicts with the principle of research freedom (S008).

- What This Means for Practice: A physiognomy critic cannot simply say "this is false." They need to specify which mechanisms are flawed, under what conditions they might be valid, and why current evidence is insufficient. This requires more work, but it's the only way to be convincing.

- Why Disagreements Don't Weaken Critics' Position: The presence of debates within the critical community shows it's not ideological. Ideologues don't argue; they declare. Researchers argue because they seek truth, not victory.

For journalists, regulators, and researchers, this means: demand not agreement, but transparency. What specific assumptions does the author make? Where might they be wrong? What data could refute them?

Verification Protocol: Seven Questions That Will Expose Pseudoscientific "AI Physiognomy" Work in Three Minutes — A Checklist for Researchers, Journalists, and Regulators

A practical tool for rapid assessment of scientific validity in works claiming AI can determine personality characteristics from appearance.

✅ Question 1: Has the work undergone independent peer review in a journal with an impact factor above 3.0?

Preprints on ResearchGate or arXiv do not undergo rigorous review. Publication in a peer-reviewed journal doesn't guarantee quality, but its absence is a red flag.

Check the journal in Scopus or Web of Science databases. If the work exists only as a preprint for more than a year, that's suspicious (S003).

✅ Question 2: Were the hypothesis and analysis plan registered before data collection?

Preregistration on platforms like Open Science Framework protects against p-hacking and HARKing (hypothesizing after results are known).

If authors cannot provide a link to preregistration, results may be the product of data fitting (S002).

✅ Question 3: Has the model been tested on an independent sample from a different cultural context?

Validation only on a portion of the same dataset (train-test split) is insufficient. Testing on completely independent data collected in another country, at another time, by other researchers is required.

Without such validation, results may be specific to the particular dataset (S001).

⛔ Question 4: Are obvious confounders (age, gender, race, socioeconomic status) controlled for?

If a model "predicts" a characteristic but authors haven't shown that the prediction holds after controlling for sociodemographic variables, the result may be entirely explained by these confounders.

Demand tables with multivariate analysis results (S001).

⛔ Question 5: Are data and code disclosed for independent verification?

Reproducibility is the foundation of science. If authors don't provide access to data (or at least synthetic data with the same properties) and analysis code, results cannot be verified.

References to "confidentiality" or "trade secrets" in academic work are unacceptable (S002).

⛔ Question 6: Are ethical risks and potential for misuse discussed?

Legitimate work in a sensitive area must include detailed discussion of potential risks: how results could be used for discrimination, profiling, privacy violations.

Absence of such discussion indicates that authors either don't recognize the consequences or are deliberately concealing them. Familiarize yourself with principles of responsible AI development.

⛔ Question 7: Does it use language characteristic of pseudoscience?

Red flags in text: absolute claims without qualifications ("AI accurately identifies criminals by face"), appeals to authority without evidence, absence of limitations discussion, accusations that critics are "biased" or practicing "political correctness".

- Scientific language: "The model showed a correlation of r = 0.32 (p < 0.05) when controlling for age and gender, but the effect may be explained by cultural differences in emotional expression".

- Pseudoscientific language: "Our AI revealed a hidden connection between facial features and personality that scientists have ignored out of political correctness".

If a work contains 4+ red flags from this list, it doesn't deserve trust. If it contains 2–3, critical reading and consultation with a methodologist are required. If red flags are absent, the work may be legitimate — but this doesn't guarantee its correctness.
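The scoring rule can be written down as a small helper for anyone applying the checklist to many papers. This is a sketch, not part of the original protocol: the flag names are hypothetical labels for the seven questions, and because the thresholds above do not say what a single red flag means, the code reports that case as unspecified rather than guessing.

```python
RED_FLAGS = (
    "no_peer_review",             # Question 1
    "no_preregistration",         # Question 2
    "no_independent_validation",  # Question 3
    "confounders_uncontrolled",   # Question 4
    "data_or_code_withheld",      # Question 5
    "no_ethics_discussion",       # Question 6
    "pseudoscientific_language",  # Question 7
)

def assess(flags_present):
    """Return (red_flag_count, verdict) for an iterable of flag names."""
    flags = set(flags_present)
    unknown = flags - set(RED_FLAGS)
    if unknown:
        raise ValueError(f"unknown flags: {sorted(unknown)}")
    count = len(flags)
    if count >= 4:
        verdict = "does not deserve trust"
    elif count >= 2:
        verdict = "read critically and consult a methodologist"
    elif count == 0:
        verdict = "may be legitimate; correctness not guaranteed"
    else:
        verdict = "one red flag: not covered by the stated thresholds"
    return count, verdict

# Example: a preprint with no preregistration and no released code.
print(assess(["no_peer_review", "no_preregistration", "data_or_code_withheld"]))
```

Keeping the flags as named strings rather than a bare count makes it possible to record which questions a paper failed, which matters more for a written review than the total.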

Remember: absence of evidence of harm is not evidence of absence of harm. The burden of proof lies with authors claiming AI can determine personality characteristics from appearance. Review the analysis of physiognomic AI and threats to civil liberties.