Physiognomic AI: When Algorithms Judge People by Skulls and Noses — Defining the Phenomenon Tech Giants Prefer to Ignore

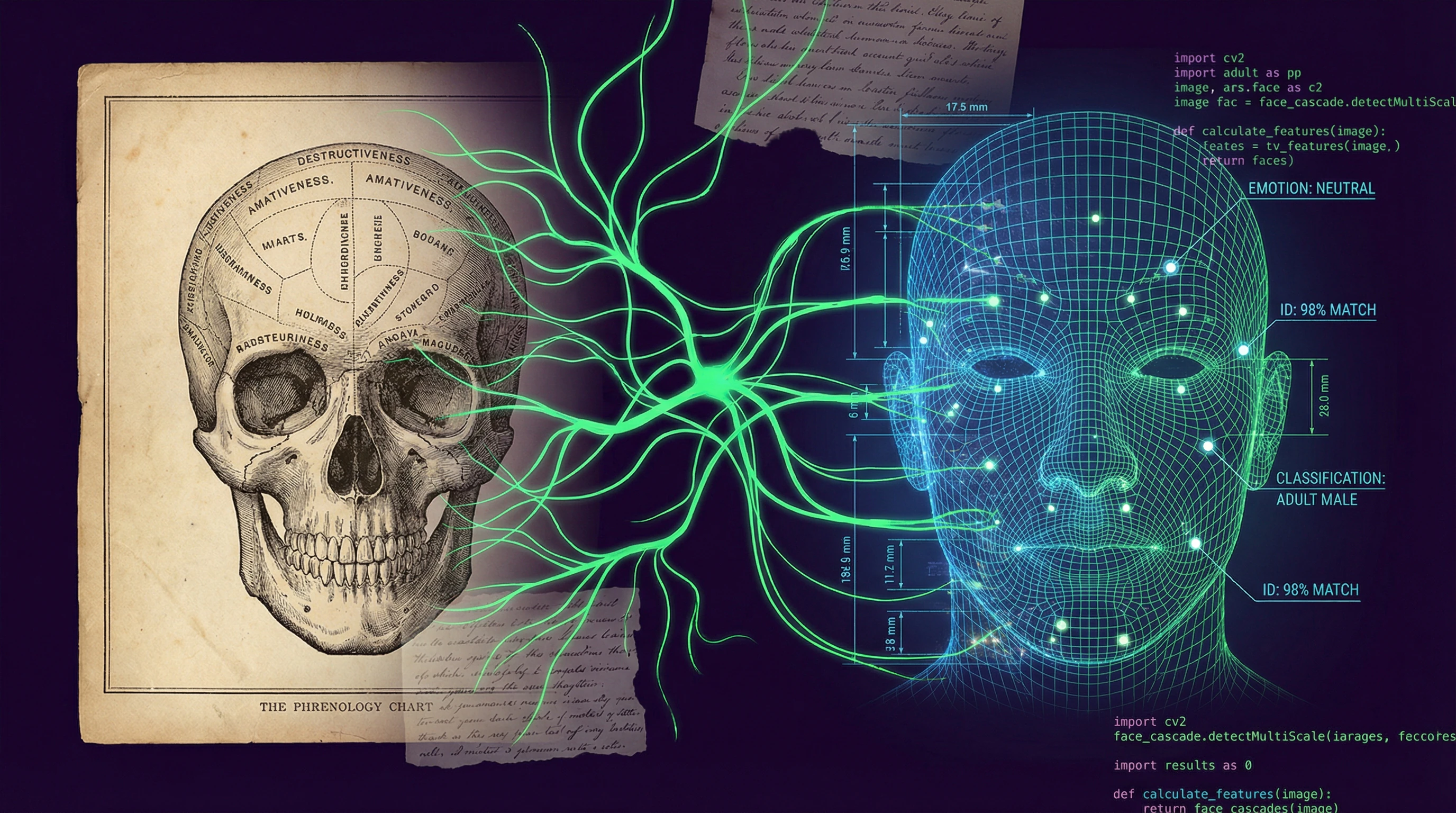

Physiognomic artificial intelligence is the practice of using computer vision to infer or create hierarchies of a person's body composition, protected class status, perceived character, capabilities, and future social outcomes from their physical or behavioral characteristics (S003, S004). The spectrum includes facial emotion recognition systems, algorithms that predict criminal tendencies from skull shape, and assessments of a person's "trustworthiness" from the distance between their eyes.

This isn't a hypothesis about the future. Such systems are already deployed in law enforcement, credit institutions, and hiring systems (S008). Tech giants remain silent because acknowledging the phenomenon requires an admission: they've resurrected 19th-century physiognomy and given it GPUs.

Three Mechanisms of Physiognomic AI

- Classification: dividing people by physical features such as skin color, face shape, bone structure, and distance between the eyes. The algorithm extracts these features from images automatically.

- Hierarchization: assigning group characteristics based on classification, so that certain groups are declared more trustworthy, intelligent, or law-abiding than others. This isn't description, it's ranking.

- Prediction: claiming the algorithm can determine future behavior, such as whether a person will become a criminal, a successful employee, or a loyal citizen (S003). This is the step from classification to destiny.

Why Computer Vision Is the Perfect Carrier for 21st-Century Physiognomy

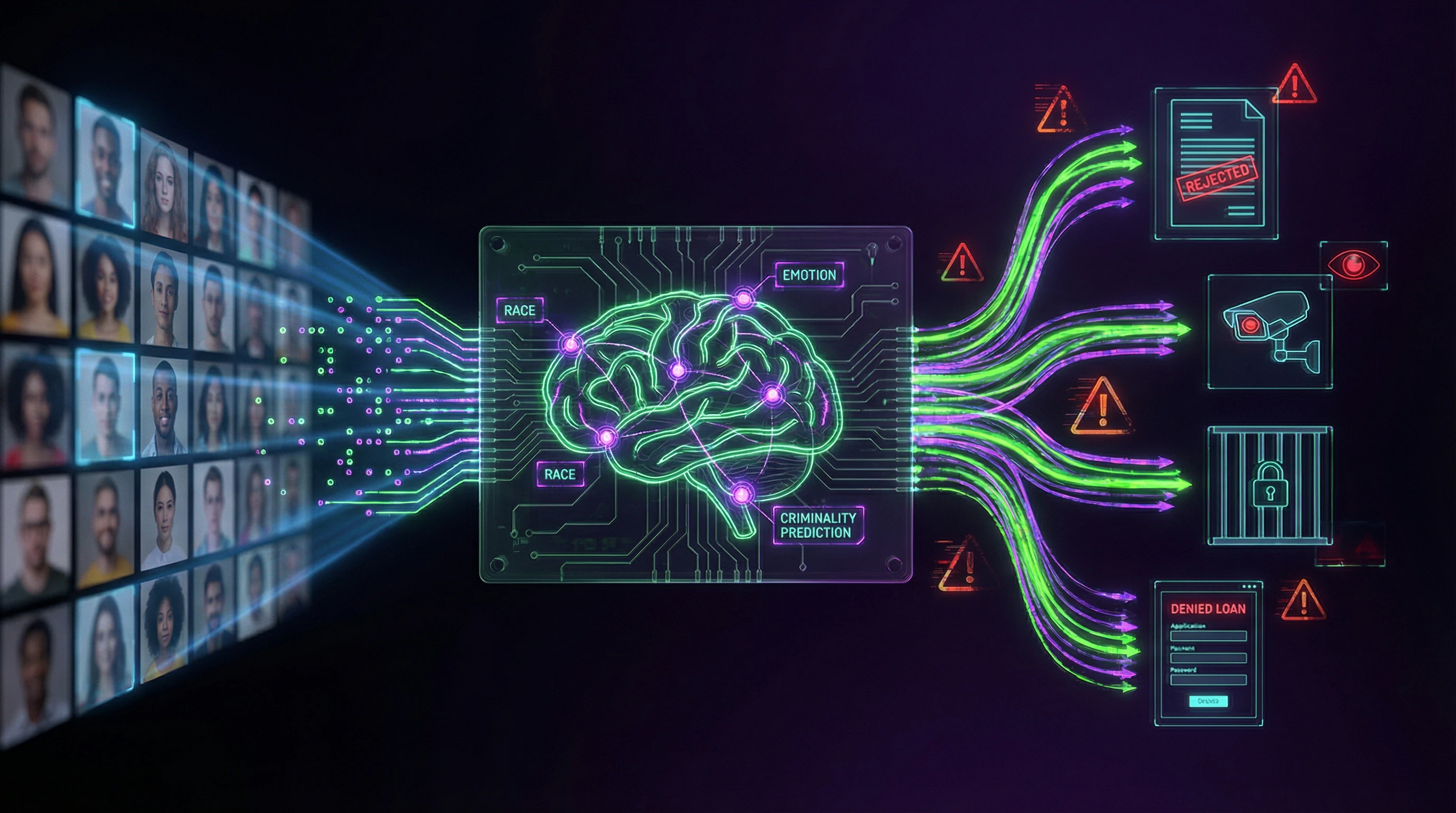

Computer vision serves as the central vector for physiognomic AI (S003, S004). Systems train on massive datasets of labeled faces, where each image is linked to tags — gender, age, race, emotion.

The algorithm learns to find correlations between pixels and social categories, without understanding that these categories are social constructs, not biological facts. The result: the machine reproduces and amplifies human prejudices, giving them the appearance of objectivity.

The Boundary Between Biometrics and Physiognomy

| Biometric Identification | Physiognomic Inference |
|---|---|
| Matches a face against a database to establish identity | Claims it can infer internal qualities from appearance |
| Technically feasible and sometimes justified | Scientifically invalid and ethically impermissible |
| Answer: "This is person X" | Answer: "This person is a criminal / untrustworthy / dangerous" |

The boundary is blurred: many systems begin with identification but then add layers of inference about character, intentions, or future behavior (S003). This transforms a control tool into a condemnation tool.

For more on the mechanisms of this transformation, see the article "AI Physiognomy and the Return of Phrenology".

Five Arguments Defending Physiognomic AI — and Why They Seem Convincing to Those Unfamiliar with the History of Science

Before dismantling physiognomic AI with evidence, we must understand why the idea seems appealing. Steelmanning, or presenting the strongest version of an opponent's argument, reveals that defenders of physiognomic AI rely on five seemingly logical theses.

🔬 First Argument: Correlations Exist, and Algorithms Find Them

Defenders claim: if an algorithm detects a statistical relationship between facial structure and certain behavior, then that relationship is real. The machine isn't biased — it simply analyzes data.

If a model predicts criminal behavior with 70% accuracy, that's better than random guessing. The problem is that correlation doesn't equal causation, and a 30% error rate isn't a statistical margin of error: every false positive is a real person wrongly accused.
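
The 70% figure can be made concrete with a little arithmetic. The sketch below is illustrative only: the population of 10,000, the 5% base rate, and the assumption that the model is right 70% of the time on both offenders and non-offenders are all hypothetical numbers chosen for the example, not figures from the sources.

```python
def flagged_breakdown(population, base_rate, sensitivity, specificity):
    """Count true and false positives for a binary 'risk' classifier."""
    positives = population * base_rate          # people who actually have the trait
    negatives = population - positives          # people who do not
    true_pos = positives * sensitivity          # correctly flagged
    false_pos = negatives * (1 - specificity)   # wrongly flagged
    precision = true_pos / (true_pos + false_pos)
    return true_pos, false_pos, precision


# Hypothetical inputs: 10,000 people, 5% base rate, 70% accuracy on each group.
tp, fp, precision = flagged_breakdown(10_000, 0.05, 0.70, 0.70)
print(f"correctly flagged: {tp:.0f}")
print(f"wrongly flagged:   {fp:.0f}")
print(f"share of flags that are correct: {precision:.1%}")
```

Under these assumptions the model is 70% accurate overall, yet it flags about 2,850 innocent people alongside 350 correct hits, so only about one flag in nine is right. The rarer the predicted behavior, the worse this ratio becomes.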

📊 Second Argument: Emotions Are Reflected in Faces — This Is a Proven Psychological Fact

Proponents cite Paul Ekman's research on universal facial expressions. If anger, fear, and joy have recognizable facial patterns, why not train an algorithm to recognize them?

However, critics point out: Ekman's theory oversimplifies the cultural diversity of emotional expressions and ignores context. Facial expression isn't a window into the soul, but a social signal dependent on culture, situation, and who's watching.

🧬 Third Argument: Genetics Influences Both Appearance and Behavior — Why Can't AI Capture This Connection

Genetic factors do indeed influence both physical traits and some behavioral tendencies. If certain genetic markers correlate with facial structure and with predisposition to impulsivity, an algorithm could theoretically detect this connection.

It sounds scientific — but ignores that behavior is determined by complex interactions of genes, environment, and culture, not nose shape. This is reductionism disguised as statistics.

⚙️ Fourth Argument: Technology Is Neutral — The Problem Is in the Data, Not the Method

This is the most dangerous argument: computer vision itself isn't racist, it's just been trained on biased data. Fix the dataset and the problem is solved. The rebuttal has three parts:

- Physiognomic logics are embedded in the technical mechanism of computer vision applied to humans (S003, S004)

- The problem isn't the data — the problem is the very idea that appearance can predict internal qualities

- Even a perfect dataset doesn't save us from conceptual error

🛡️ Fifth Argument: Rejecting the Technology Means Rejecting Safety and Efficiency

The final argument appeals to fear: if we ban physiognomic AI, we won't be able to prevent crimes, identify terrorists, or optimize hiring.

This is a false dilemma. Alternative risk assessment methods exist, based on behavior rather than appearance. But fear is a powerful motivator, and this argument works on politicians and corporate executives.

For in-depth analysis of the mechanisms by which these arguments become convincing, see the analysis of phrenology's return in the AI era and protocols for debunking pseudoscientific claims.

Evidence Base Against Physiognomic AI: Why Reviving Physiognomy and Phrenology Is Not Progress, But Medieval Regression with GPUs

The revival of the pseudosciences of physiognomy and phrenology through computer vision and machine learning is a matter of urgent concern (S003, S004). Historical analysis shows: physiognomy and phrenology justified slavery, colonialism, forced sterilization, and genocide.

The resurrection of these theories through AI creates the risk of repeating these horrors in the digital age—but with a scale and speed that the 19th century could not have imagined.

📊 Historical Context: How Physiognomy Justified Genocide

In the 19th century, physiognomy and phrenology were widely treated as legitimate science. Cesare Lombroso claimed that criminals could be identified by skull shape. Phrenologists measured heads to prove European superiority over Africans and Asians.

These theories were used to justify slavery in the United States, colonialism in Africa and Asia, eugenics programs in Europe and America. The Nazis applied physiognomic measurements to classify races and send people to concentration camps. By the mid-20th century, the scientific community rejected these theories as pseudoscience—but algorithms are resurrecting them.

🧪 Contemporary Research: Why Emotion Recognition Algorithms Don't Work

Numerous studies demonstrate the failure of physiognomic AI. Emotion recognition systems show accuracy no better than random chance in real-world conditions, especially for people of non-European descent.

Algorithms predicting criminality from faces reproduce racial prejudices: they more frequently classify Black people as potential criminals, regardless of actual behavior. This is not a calibration error—it's a fundamental problem with the method (S003).

- Emotion recognition systems: 50–60% accuracy in real-world conditions, and lower still for underrepresented groups (women, elderly people, non-white people)

- Criminality algorithms: systematic overestimation of risks for African Americans

- Biometric systems: denial of access for people with neurological differences

🧾 Three Scandals That Exposed the Danger of Physiognomic AI

HireVue: a facial analysis system for evaluating job candidates was accused of discrimination. The algorithm assessed not competencies but facial expressions and tone of voice, which disadvantaged people with autism or other neurological differences and people from non-Western cultures.

Uyghur Recognition: China's system for recognizing Uyghurs by face was used for mass detentions and sending people to re-education camps.

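COMPAS: a recidivism-prediction algorithm used in US courts systematically overestimated risk for African Americans, contributing to harsher sentences (S003). COMPAS scores questionnaire answers and criminal history rather than faces, but it shows the same pattern: a biased record of past arrests is turned into a prediction about individuals.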

🔎 Meta-Analysis: What Systematic Reviews Say About Accuracy

Systematic literature reviews show that physiognomic AI does not achieve its claimed accuracy in independent tests. Studies funded by developers report 80–90% accuracy, but independent verification in real-world conditions reduces this figure to 50–60%, roughly what random guessing achieves on a yes/no decision.

| Testing Condition | Claimed Accuracy | Independent Verification | Conclusion |
|---|---|---|---|
| Controlled laboratory | 80–90% | 70–75% | Overestimation under ideal conditions |
| Real-world conditions | — | 50–60% | Random guessing level |
| Underrepresented groups | — | 30–45% | Tool of discrimination |

Accuracy drops sharply for underrepresented groups: women, elderly people, and non-white people. This means the systems function as tools of discrimination, not objective assessment (S003, S004).

For more details on the mechanisms of these errors, see the analysis of the return of phrenology in modern algorithms and principles of responsible AI development.

Error Mechanisms: Why Physiognomic AI Confuses Correlation with Causation and Creates Self-Fulfilling Prophecies

Physiognomic AI makes a fundamental epistemological error: it mistakes correlation for causation. If an algorithm detects that people with a certain facial shape are arrested more frequently, its output is read as if facial shape itself signaled criminal behavior.

In reality, the cause is systemic racism: police stop and arrest people of certain appearances more frequently, creating a biased dataset (S003).

The algorithm sees a connection between face and arrest, but doesn't see poverty, police surveillance, and structural inequality as common causes. The system punishes people for their social position, masking this as objective risk assessment.

🧬 Confounders: Hidden Variables That Algorithms Don't See

A confounder is a hidden variable that affects both the input a model sees and the outcome it is trained to predict. Poverty affects nutrition (reflected in the face) and arrest probability (due to police surveillance in low-income neighborhoods).

Result: the algorithm creates an illusion of causal connection between facial features and criminality, when both variables depend on a third factor—socioeconomic status.
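
A small simulation can illustrate how a confounder produces this illusion. Everything in the sketch is a stated assumption: the probabilities are invented, `simulate` and `arrest_rate` are hypothetical helper names, and the "facial marker" stands in for any visible trait that is more common under poverty. By construction, the marker has no effect on arrest at all.

```python
import random


def simulate(n=100_000, seed=0):
    """Simulate people whose facial marker and arrest both depend only on SES."""
    rng = random.Random(seed)
    people = []
    for _ in range(n):
        low_ses = rng.random() < 0.5
        # Hypothetical: the marker is more common under poverty (e.g. via nutrition).
        marker = rng.random() < (0.6 if low_ses else 0.2)
        # Arrest depends on SES only; the marker plays no role at all.
        arrested = rng.random() < (0.20 if low_ses else 0.05)
        people.append((low_ses, marker, arrested))
    return people


def arrest_rate(people, marker, low_ses=None):
    """Arrest rate for a marker value, optionally within one SES stratum."""
    group = [p for p in people
             if p[1] == marker and (low_ses is None or p[0] == low_ses)]
    return sum(p[2] for p in group) / len(group)


people = simulate()
print("all people (marker, no marker):",
      round(arrest_rate(people, True), 3), round(arrest_rate(people, False), 3))
for ses in (True, False):
    print("low SES" if ses else "high SES", "(marker, no marker):",
          round(arrest_rate(people, True, ses), 3),
          round(arrest_rate(people, False, ses), 3))
```

Across the whole population, people with the marker are arrested noticeably more often. Within each socioeconomic stratum the gap all but disappears, which is what one would expect when the face carries no causal information and only reflects the third factor.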

🔁 Feedback Loops: How Physiognomic AI Creates the Reality It Predicts

Self-fulfilling prophecy is a key harm mechanism. If an algorithm predicts criminality based on appearance, police intensify surveillance of such individuals.

- Intensified surveillance leads to more arrests

- More arrests confirm the algorithm's prediction

- The system creates evidence of its own correctness

- This evidence is an artifact of discrimination, not objective reality (S003, S004)
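
The loop above can be sketched as a toy model. It assumes two groups that offend at exactly the same rate, recorded arrests that scale with how closely each group is watched, and a hypothetical `concentration` parameter describing how strongly surveillance is reallocated toward the group with the higher score. None of these modeling choices come from the sources; they only make the mechanism visible.

```python
def run_feedback_loop(initial_share, rounds=10, concentration=2.0, offense_rate=0.1):
    """Track the share of surveillance aimed at group A over repeated rounds.

    Both groups offend at the same true rate; only surveillance differs.
    """
    share = initial_share
    history = [share]
    for _ in range(rounds):
        # Recorded arrests scale with how closely each group is watched.
        arrests_a = share * offense_rate
        arrests_b = (1 - share) * offense_rate
        # The "risk score" is each group's share of recorded arrests.
        score_a = arrests_a / (arrests_a + arrests_b)
        score_b = 1 - score_a
        # Hypothetical policy: surveillance follows the scores, over-weighting
        # the higher one when concentration > 1.
        weight_a = score_a ** concentration
        weight_b = score_b ** concentration
        share = weight_a / (weight_a + weight_b)
        history.append(share)
    return history


for k in (1.0, 2.0):
    path = run_feedback_loop(0.55, concentration=k)
    print(f"concentration={k}: start {path[0]:.2f}, end {path[-1]:.2f}")
```

With `concentration=1.0` the initial 55/45 imbalance never corrects itself, because the arrest data can only mirror where police already look. With `concentration=2.0` the imbalance grows every round until nearly all surveillance falls on one group, even though the true offense rates are identical.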

🧷 Reductionism: Why Complex Behavior Cannot Be Reduced to Pixels

Human behavior is determined by the interaction of genetics, epigenetics, neurobiology, psychology, social environment, culture, economics, and chance. Physiognomic AI reduces this complexity to pixel patterns.

This isn't simplification—it's a category error, comparable to trying to predict weather by sky color. Some information is contained in appearance, but it's insufficient for reliable predictions about character or future behavior (S003).

For more on how AI reproduces historical scientific errors, see AI Physiognomy and the Return of Phrenology.

Conflicts in Sources: Where Researchers Disagree and Why It Matters for Understanding the Problem

The scientific community is divided on physiognomic AI. Three main positions (abolitionism, reformism, and techno-optimism) reflect a fundamental split over whether the technology can be fixed or must be banned.

These disagreements are not academic. They determine which laws will be passed, which systems deployed, whose rights protected.

🧩 Abolitionists vs. Reformists: Can Physiognomic AI Be Fixed or Must It Be Banned

Abolitionists, including the authors of the Fordham Law study, argue that physiognomic logics are embedded in the technical mechanism of computer vision applied to humans (S003, S004). The problem cannot be solved by improving data or algorithms—a complete ban is needed.

Reformists counter: the technology is neutral, the problem is in its application. They propose algorithm audits, transparency, and accountability. Abolitionists respond: audits cannot fix a fundamentally flawed concept.

The disagreement here is not about implementation details. This is a debate about whether a technical solution even exists for a problem that is inherently not technical, but conceptual.

🔬 The Accuracy Debate: Is 70% Sufficient for Making Decisions About People's Lives

Techno-optimists point to improving algorithm accuracy: modern systems achieve 70–80% accuracy in laboratory conditions. Critics respond: 70% accuracy means 30% errors, and when it comes to freedom, employment, or life, this is unacceptable.

Moreover, accuracy drops for minorities, creating systematic discrimination. The debate is unresolved, but consensus is shifting toward critics: even high average accuracy does not justify using technology that discriminates against vulnerable groups (S003).

- Average accuracy of 70–80% conceals variance across demographic groups

- A 30% error rate is unacceptable for decisions affecting lives

- Systematic error for minorities is not a technical problem, but a justice problem
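
A short sketch shows how an average can hide a gap between groups. The evaluation log below is entirely hypothetical (800 majority-group cases and 200 minority-group cases with invented outcomes), and `accuracy_by_group` is an illustrative helper, not part of any audited system.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Return overall accuracy and a dict of per-group accuracy.

    records: iterable of (group_label, prediction_was_correct) pairs.
    """
    totals = defaultdict(int)
    correct = defaultdict(int)
    for group, is_correct in records:
        totals[group] += 1
        correct[group] += int(is_correct)
    overall = sum(correct.values()) / sum(totals.values())
    per_group = {g: correct[g] / totals[g] for g in totals}
    return overall, per_group


# Hypothetical evaluation log: 800 majority-group cases, 200 minority-group cases.
records = ([("majority", True)] * 640 + [("majority", False)] * 160 +
           [("minority", True)] * 90 + [("minority", False)] * 110)

overall, per_group = accuracy_by_group(records)
print(f"overall: {overall:.0%}")
for group, acc in per_group.items():
    print(f"{group}: {acc:.0%}")
```

In this made-up log the headline figure is 73%, while the minority group sees 45% accuracy, worse than a coin flip on a yes/no decision. Reporting only the average would make that disparity invisible.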

📊 Regulatory Disagreements: Ban, Moratorium, or Industry Self-Regulation

The Fordham Law study recommends that legislators ban physiognomic AI in public spaces (S003, S004). Industry proposes self-regulation through ethical codes and voluntary standards.

Governments are considering intermediate options: moratoriums on certain applications, mandatory audits, transparency requirements. The disagreements reflect a conflict of interests: industry wants to preserve a profitable market, researchers and activists prioritize human rights.

- Ban: complete exclusion of physiognomic AI from public space. Position: the technology is inherently discriminatory.

- Moratorium: temporary suspension of deployment until standards are developed. Position: time is needed for regulation.

- Self-regulation: industry ethical codes and voluntary standards. Position: the market will correct itself.

For citizens and legislators, it's important to understand: lack of consensus does not mean uncertainty. It means the decision will be made politically, not scientifically. The choice between ban, moratorium, and self-regulation is a choice between protecting human rights and protecting industry interests.

Review the analysis of how modern algorithms repeat 19th-century errors, and the protocol for responsible AI development.

Cognitive Anatomy of the Myth: Which Psychological Triggers Make People Believe in Physiognomic AI

Physiognomic AI exploits deeply rooted cognitive biases. Understanding these mechanisms explains why discredited pseudoscience finds new adherents in the era of big data and machine learning.

⚠️ Essentialism: Why the Brain Wants to Believe That Appearance Reflects Essence

Psychological essentialism is the tendency to attribute an unchanging inner essence to people that determines their properties. The brain automatically seeks connections between appearance and character, simplifying social navigation.

Physiognomic AI exploits this tendency by offering technological confirmation of intuitive but false beliefs. People believe the algorithm will reveal a person's hidden essence because it aligns with how their own brain works.

🧠 Halo Effect: How First Impressions from Appearance Distort All Subsequent Judgments

The halo effect is a cognitive bias where a general impression of a person influences the evaluation of their specific qualities. Attractive people seem more intelligent, honest, and competent.

Physiognomic AI institutionalizes the halo effect: the algorithm learns from data where attractiveness correlates with success (attractive people are hired and promoted more often), and reproduces this discrimination as objective assessment.

🕳️ Illusion of Objectivity: Why Numbers and Algorithms Seem Impartial

People tend to trust quantitative assessments more than qualitative judgments. An algorithm outputs a number—73% probability of criminal behavior—and this number seems like an objective fact, not the result of a biased model trained on biased data.

- Numbers are perceived as facts, not interpretations

- Algorithms seem neutral, though they reflect data biases

- The illusion of objectivity masks discrimination as scientific precision (S003, S004)

🧷 Technological Determinism: Belief in the Inevitability and Neutrality of Progress

Technological determinism is the belief that technological development follows its own logic, independent of social values, and that progress is inevitable and beneficial. This ideology makes people accept physiognomic AI as an inevitable part of the future, instead of critically evaluating its consequences.

Determinism suppresses agency: if technology is inevitable, why resist? In reality, technological development results from human decisions, and these decisions can be changed. For more on the legacy we ignore, see the analysis of AI history and its social roots.

These four triggers work synergistically: essentialism creates readiness to believe, the halo effect provides emotional reinforcement, the illusion of objectivity masks bias, and determinism blocks critical resistance. Together they form a cognitive trap that's difficult to escape without conscious analysis of one's own biases.

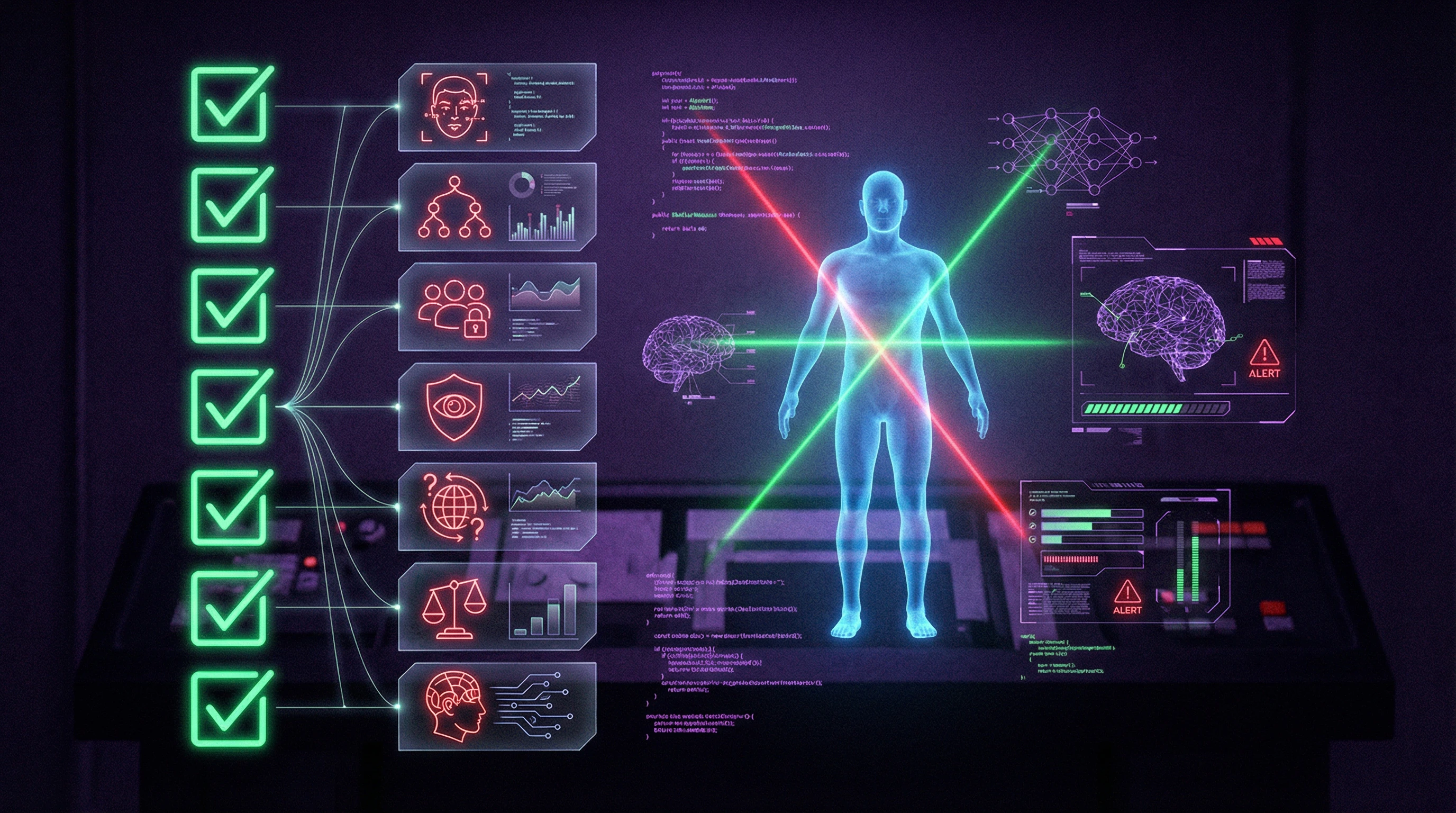

Verification Protocol: Seven Questions That Expose Physiognomic AI in Thirty Seconds

The Fordham Law research conceptualizes physiognomic AI and offers policy recommendations for regulating it. Here are seven questions that expose a system in half a minute.

- Is the system trained on a representative sample or a convenience database? If on photos from the internet—that's already bias.

- Have the developers published error metrics by demographic groups? Silence = hiding the problem.

- Does the system predict behavior or only classify visual features? If the former—it's physiognomy; if the latter—it may be legitimate (but rarely).

- Is there independent verification or only internal company testing? External validation is mandatory.

- Is the system used to make decisions about people (hiring, credit, law enforcement) or only for analysis? The former is a red line.

- Do the people the system operates on know about it and can they object? If not—it's a transparency violation.

- Is there an appeals mechanism or review process for decisions made by the system? Absence = absence of fairness.

If four or more of the answers are red flags or "unknown", you're looking at physiognomic AI disguised as objectivity (S001, S004).
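
One way to apply the threshold consistently is to record, for each of the seven questions, whether the answer is reassuring, a red flag, or unknown. The sketch below is a hypothetical encoding of the checklist: the names `Answer`, `QUESTIONS`, and `assess` are invented for illustration, and the short labels paraphrase the questions so that `OK` always means the reassuring answer.

```python
from enum import Enum


class Answer(Enum):
    OK = "ok"
    RED_FLAG = "red flag"
    UNKNOWN = "unknown"


# Short labels paraphrasing the seven questions (hypothetical wording).
QUESTIONS = (
    "representative training sample",
    "per-group error metrics published",
    "classifies visual features only, no behavior prediction",
    "independent verification",
    "not used for decisions about people",
    "affected people informed and able to object",
    "appeals or review mechanism",
)


def assess(answers, threshold=4):
    """Return (count of concerning answers, whether the threshold is met)."""
    missing = [q for q in QUESTIONS if q not in answers]
    if missing:
        raise ValueError(f"unanswered questions: {missing}")
    concerns = sum(1 for q in QUESTIONS if answers[q] is not Answer.OK)
    return concerns, concerns >= threshold


# Hypothetical vendor review: little is published, so most answers are unknown.
review = {q: Answer.UNKNOWN for q in QUESTIONS}
review["independent verification"] = Answer.RED_FLAG
review["appeals or review mechanism"] = Answer.OK
print(assess(review))
```

Treating "unknown" the same as a red flag is a deliberate choice here: it mirrors the checklist's position that silence from a vendor counts against the system rather than in its favor.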

Journalists can use this checklist when verifying company claims. Legislators—when developing standards. Citizens—when evaluating systems that affect their lives.

The trap of physiognomic AI is that it appears more objective than human judgment. In reality, it simply hides biases inside mathematics. Responsible AI use begins with asking these questions before system deployment, not after a scandal.

The protocol works because it attacks not the technology, but the logic of its application. Physiognomic AI falls not under pressure of criticism, but under pressure of transparency. Debunking begins with questions, not answers.