What biometric facial recognition means in legal and technical terms—and why it's not just a "photograph"

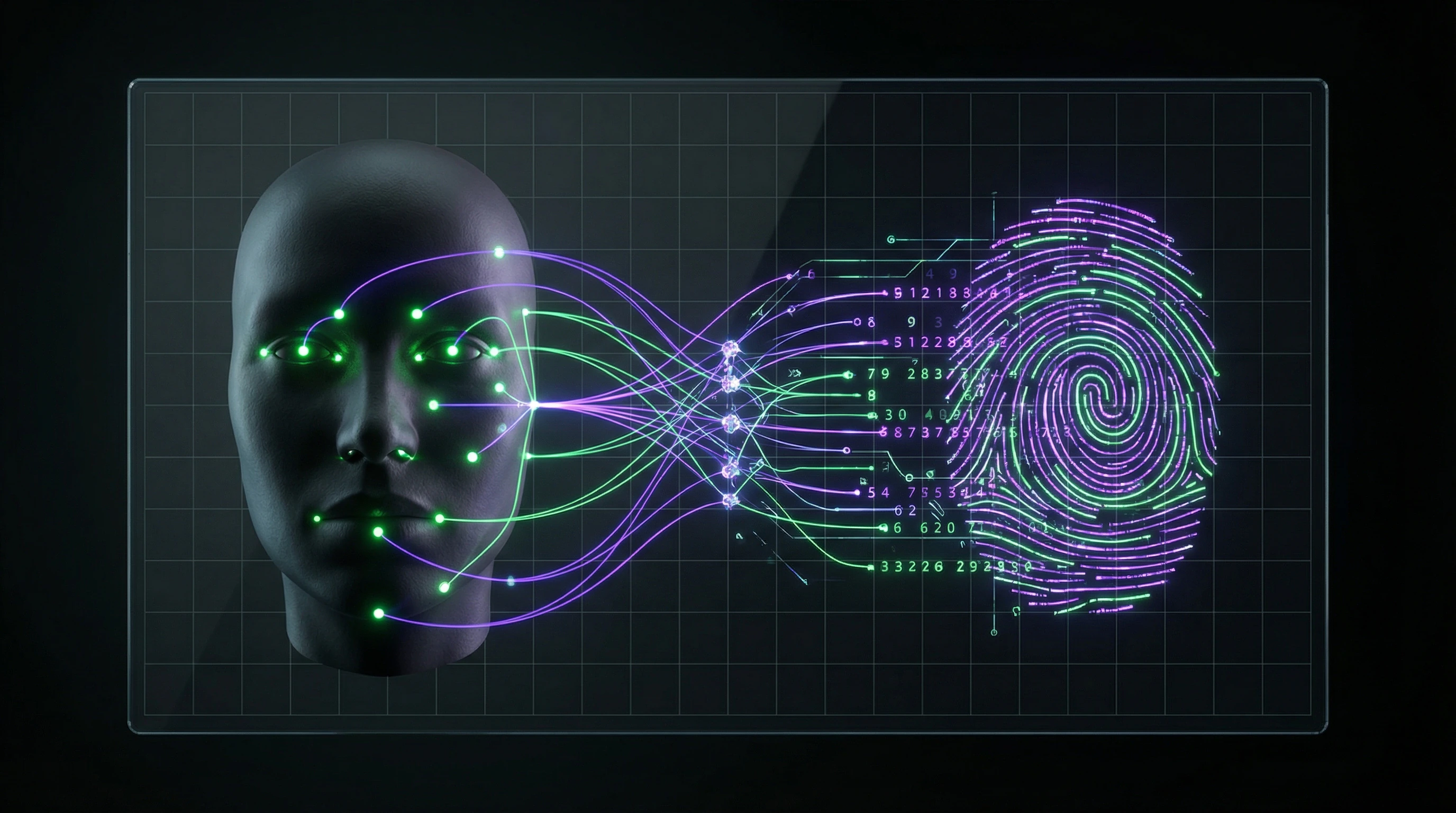

Biometric facial recognition is an automated technology for identifying individuals based on unique physiological characteristics of the face. Unlike a photograph, the system creates a mathematical model of the face: a "biometric template" or "faceprint", which is a set of numerical values describing facial geometry, such as distances between key facial points (S001).

Classical geometric approaches record measurements such as the distance between the pupils, nose width, eye socket depth, and jawline contour; modern neural-network systems encode comparable information implicitly. This representation lets a system match the same face across different lighting conditions and angles. It also creates a problem that fingerprints and iris patterns do not: a face cannot be hidden in public space.

📌 Technical architecture: four stages from frame to decision

- Detection: the system separates the face from the background and other objects in the frame.

- Normalization: tilt angle, scale, and lighting are corrected to standardize the data.

- Feature extraction: a neural network analyzes the image and produces a vector of 128 to 512 numbers (S001).

- Matching: the resulting vector is compared against a database and a similarity score is calculated. If the score exceeds a preset threshold, the system reports a match.
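The matching stage can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's implementation: it assumes templates are plain lists of floats compared by cosine similarity (a common choice, but an assumption here), and the function names, the toy 4-dimensional templates, and the threshold of 0.6 are all hypothetical.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length, non-zero embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def identify(probe, gallery, threshold=0.6):
    """1:N search: return (name, score) for the best-scoring gallery template,
    or (None, score) if even the best score is below the threshold."""
    best_name, best_score = None, -1.0
    for name, template in gallery.items():
        score = cosine_similarity(probe, template)
        if score > best_score:
            best_name, best_score = name, score
    if best_score >= threshold:
        return best_name, best_score
    return None, best_score

# Toy 4-dimensional "templates"; real systems use 128-512 dimensions.
gallery = {
    "alice": [0.9, 0.1, 0.3, 0.2],
    "bob": [0.1, 0.8, 0.2, 0.5],
}
probe = [0.85, 0.15, 0.35, 0.25]
name, score = identify(probe, gallery)
print(name, round(score, 3))
```

The threshold is the only place where a "decision" is made: everything before it produces numbers, which is why the choice of threshold matters so much for the error rates discussed below.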

⚖️ Legal classification: why biometrics require enhanced protection

In international law, biometric data is a special category of personal data. GDPR lists biometric data processed to uniquely identify a person among its "special categories" of data, whose processing is prohibited by default (S001).

Exceptions are set out in Article 9(2) and include the subject's explicit consent, protection of vital interests, and obligations under employment law. In the U.S., some state laws, such as Illinois's Biometric Information Privacy Act (BIPA), also require written consent before biometric data is collected.

🧱 Critical distinction: impossibility of recovery and replacement

If a password leaks—you change it. If a card is stolen—you block it. But a biometric facial template cannot be changed: a person cannot "change their face."

| Identifier | Can it be changed? | Visibility in public space |
|---|---|---|
| Password | Yes, easily | Hidden |
| Bank card | Yes, reissue | Hidden |
| Fingerprint | No | Requires physical contact |
| Face (biometric) | No | Visible always and everywhere |

Once compromised, biometric data remains vulnerable throughout a person's lifetime. This creates unique risks that distinguish biometrics from all other forms of identification.

Seven Arguments from Facial Recognition Advocates — and Why They Sound Convincing

Before analyzing the risks, we must honestly examine the arguments of proponents. The technology wouldn't have achieved such widespread adoption if it didn't offer real advantages. Understanding the logic behind these arguments allows us to assess where legitimate benefits end and unjustified risks begin.

🛡️ The Security Argument: Preventing Terrorist Attacks and Finding Criminals

Facial recognition systems enable real-time identification of wanted individuals, terrorists in databases, and missing children. Biometric identification happens instantly and doesn't require the subject's cooperation — unlike manual document checks, which can be defeated by forged documents.

Surveillance cameras equipped with recognition systems cover large areas — airports, train stations, stadiums — where manual control is physically impossible.

⚙️ The Efficiency Argument: Reducing Costs and Accelerating Processes

Automated identification radically reduces the time required for control procedures. At some airports, passengers already complete check-in, passport control, and boarding without presenting documents; a glance at the camera is enough.

In the banking sector, biometric identification accelerates account opening and transaction processing while reducing fraud risks. In corporate environments, access control systems eliminate the need for physical badges, which can be lost or transferred to third parties.

🧠 The Convenience Argument: Contactless Identification Without Additional Actions

Biometric recognition requires no active actions from the user — no need to retrieve documents, place a finger on a scanner, or enter passwords. This is especially important for people with disabilities (S002).

The technology works at a distance and requires no physical contact with devices, which matters during epidemics. For elderly people who struggle to remember passwords, biometric identification can be a more accessible authentication method.

- Requires no active user actions

- Works at a distance without physical contact

- Accessible for people with disabilities

- Reduces cognitive load (no need to remember passwords)

📊 The Accuracy Argument: Modern Systems Exceed Human Capabilities

Facial recognition algorithms based on deep neural networks achieve accuracy above 99% on standard test datasets, surpassing human ability to recognize faces in challenging conditions (S001).

Systems are not subject to fatigue, emotional states, or cognitive biases that affect human perception. Proponents add that they process thousands of faces per second and can recognize faces in poor lighting, under partial occlusion, and across age progression: exactly the conditions and data volumes that humans handle poorly.

🔁 The Scalability Argument: Technology Works at City and National Levels

Facial recognition systems are deployed at the scale of entire cities, creating unified surveillance networks with the ability to track people's movements. This enables tasks unavailable to traditional methods: finding missing persons across a city in minutes, analyzing visitor flows in shopping centers, optimizing transportation routes.

Staffing costs scale well: adding new cameras does not require a proportional increase in the number of human operators.

💎 The Inevitability Argument: Technology Has Already Become Part of Infrastructure

Facial recognition is already integrated into critical infrastructure: banking systems, government services, transportation networks, corporate security. Abandoning the technology would require dismantling existing systems with enormous costs and reduced security levels.

The technology is developing globally: if one country refuses to use it, this won't stop development in other jurisdictions, but will create competitive disadvantages in security and governance.

🧭 The Regulability Argument: Technology Is Subject to Legal Control

Unlike anonymous surveillance, biometric identification systems create a digital trail that can be verified and controlled. Each identification event can be recorded in system logs with the time, location, and operator.

This creates opportunities for auditing and accountability in cases of abuse. The match threshold can be configured for each deployment, trading missed matches against false matches to balance security and privacy. Legal mechanisms, such as requiring a court warrant for database access, can limit arbitrary use of the systems (S003).

- Digital trail: every system action is recorded with metadata (time, location, operator), which in principle allows audits and the detection of abuse.

- Configurable parameters: match thresholds can be tuned to the context of use and to the required balance between security and privacy.

- Judicial oversight: warrant requirements for database access create a formal barrier against arbitrary use of the system.
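What a "configurable threshold" means in practice can be shown with two standard error rates: the false match rate (FMR, pairs of different people wrongly accepted) and the false non-match rate (FNMR, pairs of the same person wrongly rejected). The sketch below uses invented scores, not measurements from any real system; it shows that moving the threshold does not remove errors, it only shifts them from one kind to the other.

```python
def error_rates(genuine, impostor, threshold):
    """Return (false_match_rate, false_non_match_rate) at a threshold.

    genuine:  similarity scores for pairs showing the SAME person
    impostor: similarity scores for pairs showing DIFFERENT people
    A pair is declared a match when its score is >= threshold.
    """
    fmr = sum(s >= threshold for s in impostor) / len(impostor)
    fnmr = sum(s < threshold for s in genuine) / len(genuine)
    return fmr, fnmr

# Hypothetical scores, invented for illustration only.
genuine = [0.91, 0.85, 0.78, 0.66, 0.52]
impostor = [0.12, 0.25, 0.33, 0.48, 0.61]

for t in (0.4, 0.6, 0.8):
    fmr, fnmr = error_rates(genuine, impostor, t)
    print(f"threshold={t}: FMR={fmr:.0%}, FNMR={fnmr:.0%}")
```

With these toy scores, raising the threshold from 0.4 to 0.8 drives the FMR from 40% to 0% while the FNMR climbs from 0% to 60%.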

All seven arguments are based on real advantages of the technology. However, the persuasiveness of these arguments is often based on examining only one side of the equation — benefits — while ignoring or minimizing the risks that arise with mass deployment. AI ethics and safety require comprehensive analysis, not a choice between convenience and control.

Evidence Base: What Research Says About Accuracy, Errors, and Systematic Biases in Recognition Algorithms

Claims of high accuracy require critical examination: under what conditions were the tests conducted, on what samples, and with what metrics? Systematic errors deserve particular attention: cases where algorithms perform predictably worse for certain population groups.

📊 Accuracy Metrics: What's Hidden Behind "99% Accuracy"

Claims of 99% accuracy need context. Systems operate in two modes: verification (1:1 comparison: is this the same person?) and identification (1:N search: who is this person among N records?). Verification is typically more reliable, because the system compares only two templates and therefore has only one chance to produce a false match.

In identification across a large database, the expected number of false matches grows in proportion to database size (S011). If a system has a false positive rate of 0.1% per comparison (which might be presented as "99.9% accuracy"), a single search against a database of 1 million records will produce about 1,000 false matches on average.
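The arithmetic can be checked directly. The sketch assumes, as a simplification, that every comparison produces a false match independently and with the same probability; real systems violate this (some people are systematically confused with others), so the numbers are an idealized estimate rather than a prediction for any particular system.

```python
def expected_false_matches(fmr, gallery_size):
    """Average number of false matches per 1:N search, assuming every
    comparison independently yields a false match with probability fmr."""
    return fmr * gallery_size

def prob_any_false_match(fmr, gallery_size):
    """Probability that one search returns at least one false match,
    under the same independence assumption."""
    return 1 - (1 - fmr) ** gallery_size

fmr = 0.001  # 0.1% per comparison, the figure used in the text
for n in (1, 1_000, 1_000_000):
    print(f"N={n:>9,}: expected false matches={expected_false_matches(fmr, n):,.1f}, "
          f"P(at least one)={prob_any_false_match(fmr, n):.3f}")
```

Under these assumptions, a gallery of only 1,000 records already gives roughly a 63% chance that a search returns at least one false match.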

Accuracy in laboratory conditions and accuracy in real-world deployment are two different metrics. The first measures potential, the second measures risk.

⚠️ Systematic Biases: Racial and Gender Prejudice

Multiple studies have found significantly higher error rates for people with darker skin, women, and elderly people (S012). A commonly cited cause is that training datasets contain predominantly images of middle-aged white men.

Neural networks perform worse at recognizing faces that differ from this "standard." Such biases create discrimination risks: people from underrepresented groups more frequently become victims of false accusations or cannot access services due to identification failures.

| Population Group | Typical Problem | Error Mechanism |
|---|---|---|
| People with dark skin | High false positive rate | Insufficient representation in training dataset |
| Women | Recognition errors with makeup | Algorithm trained on faces without makeup or with minimal makeup |
| Elderly people | Mismatch with younger photos in database | Age-related facial changes not accounted for in model |
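Claims of "higher error rates for some groups" become testable when the false match rate is computed separately for each group at a single, shared threshold, which is the general approach of demographic benchmark studies. The group labels and scores below are invented placeholders, not data from (S012).

```python
from collections import defaultdict

def false_match_rate_by_group(trials, threshold):
    """trials: iterable of (group, score) for impostor pairs only
    (two different people). Returns {group: false match rate}."""
    totals = defaultdict(int)
    false_matches = defaultdict(int)
    for group, score in trials:
        totals[group] += 1
        if score >= threshold:  # a different person wrongly accepted
            false_matches[group] += 1
    return {g: false_matches[g] / totals[g] for g in totals}

# Hypothetical impostor scores, invented to illustrate the calculation.
trials = [
    ("group_a", 0.20), ("group_a", 0.35), ("group_a", 0.41), ("group_a", 0.62),
    ("group_b", 0.55), ("group_b", 0.64), ("group_b", 0.71), ("group_b", 0.30),
]
rates = false_match_rate_by_group(trials, threshold=0.6)
print(rates)
print("ratio b/a:", rates["group_b"] / rates["group_a"])
```

Here group_b's false match rate is twice group_a's at the same threshold; a deployment that reports only one overall figure would hide that gap.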

🧪 Testing Conditions vs. Real-World Deployment

Laboratory tests are conducted on carefully prepared datasets: high-quality photographs, controlled lighting, frontal angles, no occlusions. In real conditions — on streets, in subways, airports — image quality is significantly lower.

Low camera resolution, poor lighting, arbitrary shooting angles, partial face obstruction by clothing or accessories result in real-world accuracy being substantially lower than claimed (S011). The gap between laboratory and field is not a technical detail, but a source of systematic errors in law enforcement.

- Laboratory conditions: controlled lighting, frontal angle, high resolution

- Field conditions: natural lighting, arbitrary angles, low resolution

- Result: accuracy drops by 5–15%, depending on the system

- Legal consequence: accusations based on recognition require additional verification

🔎 The Problem of Appearance Changes: Makeup, Aging, Medical Conditions

Recognition systems are vulnerable to appearance changes. Makeup, especially contouring, can alter the perceived facial geometry so much that the algorithm fails to recognize the person. Natural aging changes features: skin loses elasticity, wrinkles appear, facial contours change.

- Makeup and contouring: alter facial geometry in the algorithm's feature space. The system may fail to recognize a person who looks different from the original photo in the database; the problem is worse when makeup is professionally applied.

- Natural aging: wrinkles, changing facial contours, and loss of skin elasticity all shift the biometric template. A person whose database photo was taken 10 years ago may no longer be matched today.

- Medical conditions: swelling, injuries, and plastic surgery alter facial geometry. This makes regular template updates necessary, which complicates deployment and creates additional data breach risks.

The problem is compounded because people often do not know that their biometric data needs updating. The system may deny access or produce a false match, leaving the person without an explanation.

Unlike a fingerprint, a facial biometric template is not a static identifier. It is a dynamic image that changes with age, health, and personal choices. Systems that ignore this reality create an illusion of accuracy, not accuracy itself.

These technical limitations connect directly to the return of physiognomy in the AI era: algorithms repeat the errors of the 19th century, but with an appearance of scientific validity.

How Neural Networks Recognize Faces: Why the "Black Box" Creates Legal Problems

Understanding the technical mechanism is critical for assessing legal consequences. Modern systems are based on deep convolutional neural networks (CNNs) trained on millions of facial images.

The decision-making process inside a neural network remains opaque even to developers—the "black box" problem, which creates fundamental difficulties for legal regulation.

🧠 Deep Neural Network Architecture: From Pixels to Decision

Recognition systems use deep neural networks with dozens or hundreds of layers, as in FaceNet, VGGFace, and ArcFace (strictly, ArcFace is a training loss, usually paired with a ResNet backbone). The first layers extract simple features: edges, corners, textures.

Middle layers combine these features into more complex patterns: eye shapes, nose, mouth. Deep layers create abstract representations that no longer have direct visual interpretation—multidimensional vectors in feature space (S001). The final layer transforms these vectors into a compact representation—a biometric template for comparison.

- Input layer: receives the image pixels

- Early layers: extract local features (textures, edges)

- Middle layers: combine them into regional patterns

- Deep layers: build abstract facial representations

- Output layer: produces the biometric template; the match decision is made afterwards by comparing templates
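The step from the output layer to a comparable template can be illustrated with one common design: FaceNet, for example, scales its embedding to unit length, so that all templates lie on a hypersphere. The sketch below uses made-up three-dimensional outputs. On unit vectors, cosine similarity is just a dot product and squared Euclidean distance equals 2 − 2·cos, so the two comparison metrics rank candidates identically.

```python
import math

def l2_normalize(v):
    """Scale a vector to unit length (assumes v is not all zeros)."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def squared_distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

# Hypothetical raw outputs of the final layer for two face images.
raw_1 = [3.0, 4.0, 0.0]
raw_2 = [4.0, 3.0, 0.0]
t1, t2 = l2_normalize(raw_1), l2_normalize(raw_2)

cos = dot(t1, t2)  # on unit vectors the dot product IS the cosine similarity
print(round(cos, 4))                       # 0.96
print(round(squared_distance(t1, t2), 4))  # 0.08, i.e. 2 - 2 * 0.96
```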

⚙️ The Explainability Problem: Why Did the System Make This Decision

The critical problem is that the system cannot explain why it made a specific decision. If it identifies a person as matching a database record, there is no direct way to state which facial features led to that conclusion; post-hoc methods such as saliency or occlusion maps give only approximate answers.

GDPR gives people rights concerning automated decisions, including access to meaningful information about the logic involved (Articles 13–15 and 22; Recital 71 refers to a right to obtain an explanation) (S002). But implementing this for deep neural networks is technically very difficult, which opens a gap between legal requirements and technical reality.

How can a person challenge a decision if it's impossible to understand its basis? This is a fundamental problem for fair judicial process.
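Post-hoc methods try to narrow this gap. One of the simplest, occlusion sensitivity, blanks out one patch of the image at a time and records how much the similarity score drops. The sketch below runs it against a hypothetical toy model (`toy_embed`) that reads only the top-left corner, so the correct answer is known in advance. With a real network, the resulting heatmap shows where the score is sensitive, not why, which is one reason such maps are a weak substitute for an explanation that could be challenged in court.

```python
def occlusion_sensitivity(image, embed, reference, similarity, patch=2):
    """Blank out each patch x patch block in turn and record how far the
    similarity to the reference template falls. Returns {(top, left): drop}."""
    height, width = len(image), len(image[0])
    baseline = similarity(embed(image), reference)
    heatmap = {}
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            occluded = [row[:] for row in image]  # copy so the original is untouched
            for r in range(top, min(top + patch, height)):
                for c in range(left, min(left + patch, width)):
                    occluded[r][c] = 0.0
            heatmap[(top, left)] = baseline - similarity(embed(occluded), reference)
    return heatmap

def toy_embed(img):
    """Hypothetical stand-in for a network: one feature, the brightness
    of the top-left 2x2 block. Everything else is ignored."""
    return [img[0][0] + img[0][1] + img[1][0] + img[1][1]]

def toy_similarity(a, b):
    """Negative L1 distance: 0 for identical templates, lower otherwise."""
    return -sum(abs(x - y) for x, y in zip(a, b))

image = [[1.0] * 4 for _ in range(4)]  # a flat 4x4 "image"
reference = toy_embed(image)           # enrolled template of the same face
heatmap = occlusion_sensitivity(image, toy_embed, reference, toy_similarity)
for position, drop in sorted(heatmap.items()):
    print(position, drop)
```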

🔁 Training on Data: Where Millions of Faces Come From

Training an effective system requires millions of labeled facial images. Where do they come from? Often from publicly available sources: social networks, photo banks, video recordings.

Many people don't know their photos are being used to train commercial systems. This violates personal data protection principles (S002). If the training dataset is unbalanced (few images of people from certain ethnic groups), this leads to systematic biases in system performance.

| Data Source | Volume | Problem |
|---|---|---|
| Social networks | Billions of photos | Lack of subject consent |
| Photo banks | Hundreds of millions | Unclear licensing terms |
| Video recordings (cameras) | Millions of frames | Mass surveillance without notification |
| Government databases | Tens of millions | Centralized control |
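The imbalance described above can at least be detected before training. A minimal sketch, assuming each training image carries a group label (itself sensitive data) and using a hypothetical minimum share of 10%, might look like this:

```python
from collections import Counter

def representation_report(labels, min_share=0.10):
    """Return each group's share of the dataset and the groups whose
    share falls below min_share (a hypothetical policy threshold)."""
    counts = Counter(labels)
    total = sum(counts.values())
    shares = {group: n / total for group, n in counts.items()}
    underrepresented = sorted(g for g, s in shares.items() if s < min_share)
    return shares, underrepresented

# Hypothetical group labels for 20 training images.
labels = ["group_a"] * 15 + ["group_b"] * 4 + ["group_c"] * 1
shares, underrepresented = representation_report(labels)
print(shares)
print(underrepresented)
```

Balanced counts are a first check, not proof of equal performance; per-group error rates still have to be measured on a separate evaluation set.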

🧷 Adversarial Attacks: How to Fool Recognition Systems

Neural networks are vulnerable to adversarial attacks: specially constructed perturbations of the input that force the system into incorrect decisions. For facial recognition, these can be physical objects such as special makeup, glasses with specific patterns, or stickers on the face.

Digital perturbations can be imperceptible to humans yet radically change the neural network's output, while physical ones can pass for ordinary accessories (S003). The existence of such attacks shows that recognition systems are not reliable under adversarial conditions.
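Why tiny perturbations work can be seen on a toy linear scorer attacked with the fast gradient sign method (FGSM), a standard digital attack. The weights and inputs below are invented; a real attack would obtain the gradient by backpropagating through the recognition network, and physical attacks such as patterned glasses use different optimization. For a linear score w·x the gradient with respect to x is w, so shifting every input by ε against the sign of its weight lowers the score by ε·Σ|w|: many small changes add up.

```python
def score(weights, x):
    """Toy linear match score: positive means "match", negative means "no match"."""
    return sum(w * xi for w, xi in zip(weights, x))

def sign(v):
    """Return 1, -1, or 0 depending on the sign of v."""
    return (v > 0) - (v < 0)

def fgsm_perturb(weights, x, epsilon):
    """FGSM step that pushes the score DOWN.

    The gradient of w.x with respect to x is w, so moving each input by
    -epsilon * sign(w_i) gives the largest score decrease achievable when
    no single input may change by more than epsilon.
    """
    return [xi - epsilon * sign(w) for w, xi in zip(weights, x)]

# Hypothetical weights and input features, invented for illustration.
weights = [0.5, -0.3, 0.8, -0.6, 0.4, -0.7, 0.2, -0.5]
x = [0.6, 0.2, 0.5, 0.1, 0.4, 0.3, 0.5, 0.2]
epsilon = 0.2

x_adv = fgsm_perturb(weights, x, epsilon)
clean, attacked = score(weights, x), score(weights, x_adv)
print(f"clean={clean:.2f} attacked={attacked:.2f}")
```

Here no input moves by more than 0.2, yet the score falls by 0.8 and flips from 0.53 (match) to about -0.27 (no match). In an image with hundreds of thousands of pixels, each individual change can be far smaller and still add up.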

If an attacker knows how to fool the system, it loses its protective function. This is critical for security applications—the system becomes a tool that can be bypassed, not a reliable control mechanism.

Paradox: the "smarter" the system becomes, the more specific the methods to bypass it. This creates an arms race between security developers and those seeking vulnerabilities.

Conflict of Interest: Where Public Safety Meets Individual Privacy Rights

Biometric facial recognition sits at the epicenter of a fundamental conflict between collective security and individual freedom. On one hand, the state has a legitimate obligation to protect citizens from crime and terrorism. On the other hand, mass surveillance creates risks of abuse, suppression of dissent, and total control.

This conflict has no simple solution—it requires careful balancing of interests, where each decision favoring one side automatically weakens the other.

🕳️ Presumption of Guilt: When Everyone Becomes a Suspect

Mass biometric surveillance inverts the presumption of innocence. In traditional legal systems, a person is considered innocent until proven guilty, and surveillance begins after suspicion arises. Mass facial recognition systems work in reverse: every person in public space is automatically scanned and checked against databases.

This means everyone is treated as a potential criminal subject to verification. Such an approach fundamentally changes the relationship between citizen and state, creating an atmosphere of total distrust.

🧩 Chilling Effect: How Surveillance Suppresses Freedom of Expression

The awareness that your face is constantly being scanned and your movements tracked changes people's behavior. This phenomenon is called the "chilling effect": people avoid visiting certain places, participating in protests, meeting with certain individuals, fearing consequences.

Even if the system is used only to find criminals, the mere fact of its existence creates psychological pressure. This is especially dangerous for freedom of assembly and expression: if protest participants know their faces will be identified and recorded, many will refuse to participate (S001).

| Scenario | Without Mass Surveillance | With Mass Surveillance |
|---|---|---|
| Protest participation | Decision based on convictions | Decision factors in the risk of being identified |
| Visiting meeting places | Location choice is free | Choice limited by fear of being recorded |
| Public activity | Anonymity in crowds | Complete traceability |

🛡️ Protecting Vulnerable Groups: Children, Refugees, Victims of Violence

Certain categories of people are particularly vulnerable to the risks of biometric surveillance. Children cannot give informed consent for processing their biometric data, yet their faces enter recognition systems through school security systems or public surveillance.

- Refugees and asylum seekers: may be identified and deported if their biometric data falls into the hands of authorities from the country they fled (S006).

- Victims of domestic violence: lose the possibility of anonymity if their faces can be found through recognition systems, making it easier for abusers to locate them (S008).

- Activists and journalists: become vulnerable to targeted repression in countries with limited press freedom.

⚠️ Risks of Authoritarian Use: From Control to Repression

History shows that surveillance technologies created to fight crime can be used for political repression. Facial recognition systems allow identification of protest participants, tracking movements of opposition members, and creating profiles of political activity.

In authoritarian regimes, such systems become tools for suppressing dissent. Even in democratic countries, there's a risk of "function creep": systems initially created for narrow security purposes gradually expand their scope, covering more and more aspects of citizens' lives (S001).

Technology is neutral, but its application depends on political context. A tool that's safe in a democracy becomes a weapon in the hands of authoritarianism.


International Standards for Biometric Data Protection: Requirements of GDPR, Convention 108+, and National Legislation

Legal regulation of facial recognition develops at several levels: international conventions, regional regulations (such as GDPR in the EU), national laws. These norms establish principles for processing biometric data, requirements for subject consent, and limitations on technology use.

However, enforcement remains a problem: technology develops faster than legislation, and monitoring compliance is difficult.

📌 GDPR: Biometrics as a Special Category of Data

GDPR (the EU General Data Protection Regulation) classifies biometric data as a special category, on par with genetic information and health data (S001). Processing it is prohibited unless one of the narrow exceptions in Article 9(2) applies, such as explicit consent, vital interests, or substantial public interest. Processing by police for law enforcement purposes is governed separately by the Law Enforcement Directive.

GDPR paradox: the regulation protects data but doesn't solve the problem of systematic algorithmic errors. Even with full procedural compliance, technology can discriminate based on gender, age, or ethnicity.

Article 9 of GDPR prohibits processing biometric data to uniquely identify a person unless an exception applies, so deploying identification in public spaces generally requires a specific legal basis in EU or national law. This creates a barrier to mass deployment of facial recognition in airports, on streets, and in shopping centers.

📌 Convention 108+: Global Minimum

Convention 108+ (Council of Europe) establishes an international standard for personal data protection, including biometrics. Its requirements are softer than GDPR but cover a broader range of countries (including Russia, Ukraine, Moldova).

- Principle of lawfulness: processing only based on law or consent

- Principle of proportionality: the purpose must justify the means

- Principle of transparency: the subject must know their data is being processed

- Right to access and correction: the subject can verify and challenge data

Convention 108+ doesn't prohibit facial recognition but requires each state to establish its own restrictions in national legislation.

📌 National Legislation: Fragmentation and Gaps

Different countries take different approaches. France and Germany restrict facial recognition in public spaces. The US relies on sectoral and state-level regulation (Illinois's Biometric Information Privacy Act, surveillance laws in individual states).

| Jurisdiction | Approach | Restrictions |
|---|---|---|
| EU (GDPR) | Prohibitive | Facial recognition in public spaces requires legislation |
| USA | Sectoral | Depends on state and sector (police, commerce, fintech) |
| China | Permissive | Minimal restrictions, widespread use in social credit system |
| Russia | Mixed | Convention 108+, but national legislation is developing |

Fragmentation creates a problem: companies operating in multiple jurisdictions must comply with different standards. This slows innovation, but it also protects citizens by preventing convergence on the weakest standard.


📌 Enforcement Problem: Technology Outpaces Law

Even strict laws (like GDPR) face control problems. How do you verify that a company isn't using facial recognition in background mode? How do you prove discrimination if the algorithm operates as a "black box"?

- Problem 1: Information asymmetry. Regulators don't have access to algorithms' source code and training data, so companies can hide errors and biases.

- Problem 2: Cross-border nature. Data is processed in the cloud on servers located in different countries. Which law applies? Who bears responsibility?

- Problem 3: Speed of innovation. New face-related technologies (deepfakes, synthetic video) appear faster than legislators can respond. Deepfake detection is a separate problem.

Conclusion: international standards establish principles, but their implementation depends on political will and technical control capabilities. Without algorithm transparency and independent auditing, even the strictest laws remain on paper.