⚖️ AI Ethics

Ethical Principles and Safety of Artificial Intelligence in Medicine

Research on ethical standards, information security, and responsible application of AI technologies in clinical practice and medical research

Overview

The implementation of artificial intelligence in medicine requires strict adherence to ethical principles and safety protocols. AI systems for intraoperative parathyroid gland visualization, algorithms for analyzing breast cancer risk factors, and clinical decision support systems for age-related macular degeneration treatment demonstrate the technology's potential, but simultaneously raise questions of data privacy, algorithm transparency, and clinical accountability. Ethical frameworks must balance innovation with patient rights protection, ensuring that AI remains a support tool rather than a replacement for professional medical judgment.

🛡️ Laplace Protocol: All AI systems undergo multi-level verification for compliance with ethical standards, including assessment of algorithm transparency, personal data protection, clinical validation, and adherence to informed consent principles before implementation in practice.



Deep Dive

Ethical Principles of AI Application in Clinical Practice: Where the Algorithm Ends and the Physician Begins

The integration of artificial intelligence into medicine creates a fundamental ethical paradox: technology designed to improve quality of care may violate basic principles of medical ethics if the boundaries of its application are not considered. Systematic reviews of AI-assisted systems in surgery demonstrate that the technology is at the validation stage and cannot replace clinical expertise.

The key question is not "can AI" but "should AI" make decisions without human involvement in critical clinical situations.

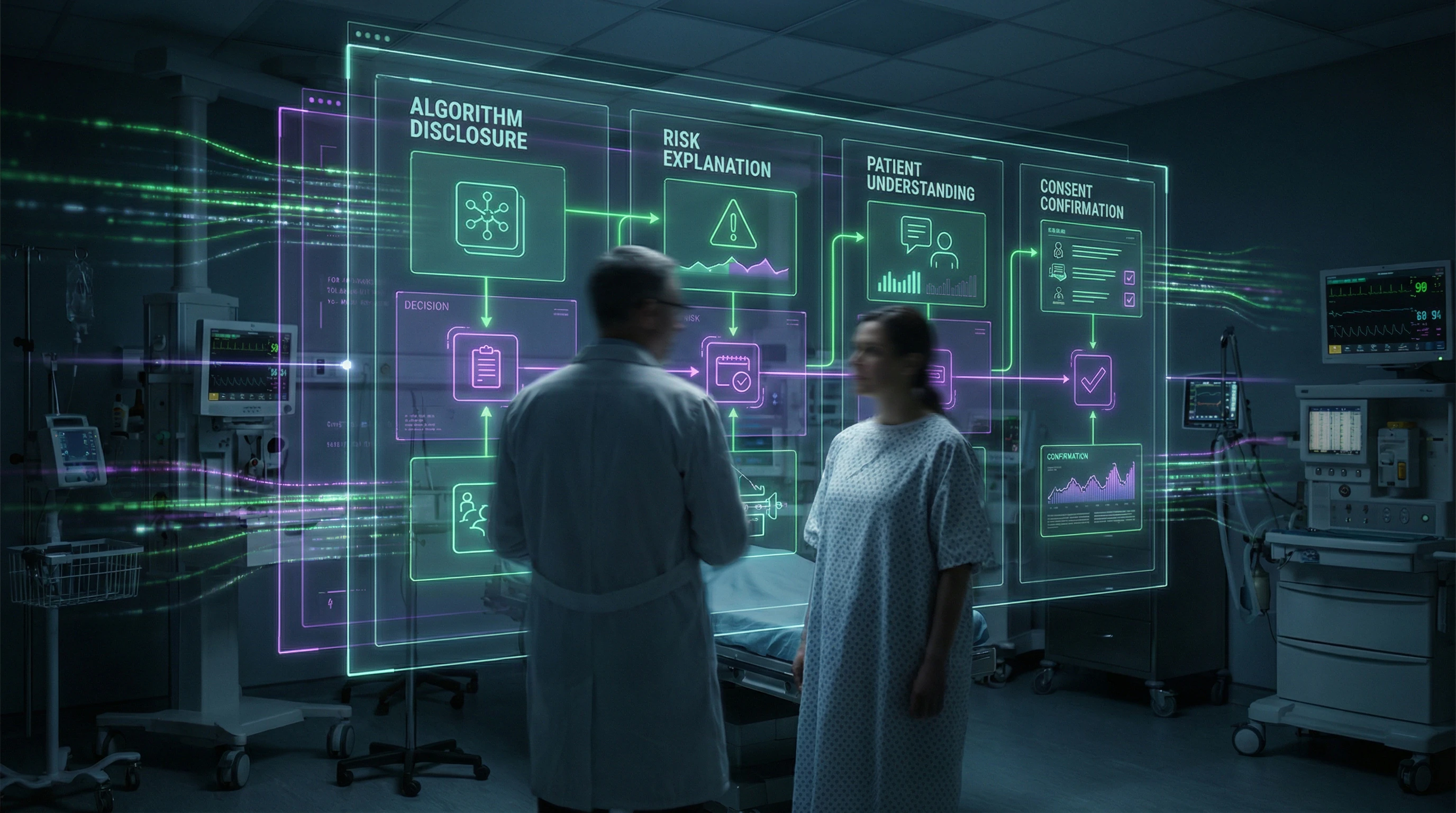

Patient Autonomy and Informed Consent in the Age of Algorithms

The traditional model of informed consent assumes that the patient understands the nature of the proposed intervention, but AI systems create a "black box" where the logic of decision-making remains opaque even to physicians. Studies of AI-assisted intraoperative imaging for parathyroid gland identification show that computer vision systems use complex algorithms whose mechanisms are not always explainable in clinical terms.

Patients have the right to know that a diagnostic or therapeutic decision is based on an algorithm, not solely on the physician's clinical judgment. Without this knowledge, patient autonomy—their ability to make informed decisions about their own health—is compromised.

- Consent protocol: must explain the AI system's role, that is, whether it is an auxiliary tool or makes decisions autonomously, how accurate the algorithm is, and what alternative methods exist.

- Clinical significance: particularly high in surgical interventions, where errors in identifying anatomical structures can lead to serious complications, such as accidental removal of parathyroid glands with subsequent hypocalcemia or damage to the recurrent laryngeal nerve.

The physician remains responsible for the final decision, but the patient must understand on what basis this decision was made.

Transparency and Explainability of Algorithms: Requirement or Utopia

Modern AI systems, especially those based on deep learning, are multilayer neural networks with millions of parameters, making their decisions practically inexplicable in the traditional sense. A systematic review of AI-assisted parathyroid gland identification indicates the need to validate the diagnostic performance of these systems but does not reveal the mechanisms by which the algorithm recognizes tissue.

The physician must trust the tool without fully understanding how it works—a situation analogous to using complex medical equipment, but with a critical difference: AI influences clinical judgment, not just parameter measurement.

The practical solution lies in developing "explainable AI" (XAI)—systems that can provide clinically meaningful justification for their recommendations. For surgical applications, this might mean visualizing areas of the image that the algorithm identified as parathyroid tissue, with confidence levels indicated.

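To evaluate a recommendation critically, the physician needs at least the following information about the system:

- Data on which the system was trained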

- Performance metrics in validation studies

- Known limitations and cases of erroneous results

- Conditions under which the algorithm's recommendation may be unreliable

Without this information, the physician cannot critically evaluate the algorithm's recommendation and bears responsibility for decisions made based on opaque data.
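One reason validation metrics and applicability conditions belong together can be shown with a little arithmetic. The sketch below computes the usual diagnostic metrics from a confusion matrix and then shows how positive predictive value changes with prevalence. All counts and rates are made-up illustrative numbers, not results from any study, and the function names are hypothetical.

```python
def diagnostic_metrics(tp, fp, fn, tn):
    """Standard diagnostic metrics from confusion-matrix counts."""
    return {
        "sensitivity": tp / (tp + fn),  # share of true structures that were flagged
        "specificity": tn / (tn + fp),  # share of non-targets correctly left unflagged
        "ppv": tp / (tp + fp),          # probability a flagged structure is a true target
        "npv": tn / (tn + fn),          # probability an unflagged structure is not a target
    }


def ppv_at_prevalence(sensitivity, specificity, prevalence):
    """Bayes' rule: PPV of the same test applied at a different prevalence."""
    true_pos = sensitivity * prevalence
    false_pos = (1.0 - specificity) * (1.0 - prevalence)
    return true_pos / (true_pos + false_pos)


# Illustrative validation counts (hypothetical).
metrics = diagnostic_metrics(tp=45, fp=5, fn=5, tn=45)

# Same sensitivity and specificity, two different prevalences.
ppv_balanced = ppv_at_prevalence(0.9, 0.9, prevalence=0.5)
ppv_rare = ppv_at_prevalence(0.9, 0.9, prevalence=0.05)
```

With identical sensitivity and specificity, the positive predictive value drops sharply when the target is rare, so a headline accuracy figure from a validation study does not transfer automatically to a setting with a different case mix.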

Medical Data Security: Why Breaches Mean More Than Fines—They Cost Lives

Medical data represents the most sensitive category of personal information. Its compromise leads not only to privacy violations but to direct physical harm to patients.

AI systems require massive datasets for training and validation. Network meta-analyses of anti-VEGF therapy effectiveness for neovascular age-related macular degeneration combine data from multiple clinical trials to compare visual outcomes and safety profiles of different drugs. Each data point represents a real patient with a unique medical history.

| Breach Risk | Consequences |
|---|---|
| Insurance discrimination | Coverage denial, premium increases |
| Employment discrimination | Job rejection based on medical status |
| Targeted attacks | Physical harm, blackmail, extortion |

Protecting Patient Privacy: From De-identification to Differential Privacy

Traditional de-identification—removing direct identifiers (name, address, date of birth)—proves insufficient in the era of big data and machine learning.

Systematic reviews analyzing the relationship between body mass index and breast cancer risk by molecular subtypes use data that includes demographic characteristics, menopausal status, hormone receptor status, and HER2 status. The combination of these characteristics can be unique and allow re-identification even with names removed. The clinical significance of understanding differential BMI effects on various cancer subtypes requires detailed data—creating tension between scientific value and privacy protection.
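The re-identification risk described above can be checked mechanically by counting how many records share each combination of quasi-identifiers (a k-anonymity style check). The following is a minimal sketch; the field names and the three records are hypothetical and chosen only to mirror the characteristics mentioned in the text.

```python
from collections import Counter

# Hypothetical quasi-identifier columns; the names are illustrative only.
QUASI_IDENTIFIERS = ("age_band", "menopausal_status", "hr_status", "her2_status")


def group_sizes(records, keys=QUASI_IDENTIFIERS):
    """Count how many records share each quasi-identifier combination."""
    return Counter(tuple(r[k] for k in keys) for r in records)


def smallest_group_size(records, keys=QUASI_IDENTIFIERS):
    """The k in k-anonymity: size of the rarest combination in the dataset."""
    return min(group_sizes(records, keys).values())


def unique_records(records, keys=QUASI_IDENTIFIERS):
    """Records whose combination appears exactly once, i.e. the easiest to re-identify."""
    sizes = group_sizes(records, keys)
    return [r for r in records if sizes[tuple(r[k] for k in keys)] == 1]


records = [
    {"age_band": "50-59", "menopausal_status": "post", "hr_status": "pos", "her2_status": "neg"},
    {"age_band": "50-59", "menopausal_status": "post", "hr_status": "pos", "her2_status": "neg"},
    {"age_band": "30-39", "menopausal_status": "pre", "hr_status": "neg", "her2_status": "pos"},
]

k = smallest_group_size(records)
at_risk = unique_records(records)
```

Here the third record is the only one with its combination of attributes, so removing the name alone would not protect that patient.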

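Differential privacy is a mathematical framework in which calibrated random "noise" is added to query results or to the training process, so that aggregate statistical conclusions remain approximately valid while the output reveals almost nothing about whether any individual record was included. The strength of the guarantee is set by a privacy budget, usually written ε: smaller values mean more noise and stronger protection.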

For training AI systems to identify parathyroid glands, this means the algorithm can learn from real surgical images without the ability to link a specific image to a specific patient.
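As an illustration of the idea, here is a minimal sketch of the Laplace mechanism applied to a simple counting query. It is not how image models are trained privately (that typically requires adding noise during training), and the ages, the threshold, and the epsilon value are all hypothetical.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(values, predicate, epsilon, rng):
    """Release a count with epsilon-differential privacy via the Laplace mechanism."""
    true_count = sum(1 for v in values if predicate(v))
    sensitivity = 1.0  # adding or removing one record changes a count by at most 1
    return true_count + laplace_noise(sensitivity / epsilon, rng)


rng = random.Random(0)             # fixed seed only so the sketch is repeatable
ages = [34, 51, 67, 72, 45, 80, 59]  # hypothetical patient ages
noisy = private_count(ages, lambda a: a >= 65, epsilon=1.0, rng=rng)
```

Each released value is perturbed, but the noise is centred on zero, so aggregate conclusions drawn from many patients stay close to the truth while any single patient's contribution is masked.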

Federated learning is another approach where the model trains locally on each medical institution's data, and only model parameter updates—not the data itself—are transmitted centrally. These technologies aren't absolute protection, but they significantly raise the complexity threshold for potential attacks.
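The federated pattern can be sketched with a toy linear model: each site runs a few gradient steps on its own data, and the coordinator only sees the resulting weights, averaged by sample count (the scheme commonly called federated averaging). The two "sites", their data points, and the hyperparameters below are hypothetical.

```python
def local_update(weights, data, lr=0.1, epochs=20):
    """Gradient descent for y ≈ w0 + w1 * x on one site's private data."""
    w0, w1 = weights
    n = len(data)
    for _ in range(epochs):
        g0 = sum((w0 + w1 * x - y) for x, y in data) / n
        g1 = sum((w0 + w1 * x - y) * x for x, y in data) / n
        w0 -= lr * g0
        w1 -= lr * g1
    return [w0, w1], n  # only weights and a sample count leave the site


def federated_average(updates):
    """Average site weights, weighting each site by its number of samples."""
    total = sum(n for _, n in updates)
    dim = len(updates[0][0])
    return [sum(w[i] * n for w, n in updates) / total for i in range(dim)]


# Two hypothetical institutions; the raw (x, y) pairs never leave local_update.
sites = [
    [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0)],
    [(1.0, 3.0), (3.0, 7.0)],
]

global_weights = [0.0, 0.0]
for _ in range(50):
    updates = [local_update(global_weights, site) for site in sites]
    global_weights = federated_average(updates)
```

The toy data follow y = 1 + 2x, and the shared model recovers those coefficients without either site disclosing its records. Transmitted weights can still leak information in practice, which is why the text describes these methods as raising the bar rather than as absolute protection.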

Encryption Protocols and Access Controls: Technical Barriers Against Human Error

Most medical data breaches occur not from technical vulnerabilities but from human factors: phishing attacks, weak passwords, unauthorized insider access.

Network meta-analyses comparing the effectiveness of different anti-VEGF agents (aflibercept, ranibizumab, bevacizumab, brolucizumab, faricimab) require access to detailed clinical trial data often stored in distributed systems. Each access point is a potential vulnerability.

- Principle of least privilege: each user receives access only to necessary data and only for the duration of a specific task

- Encryption of data both at rest and in transit

- Multi-factor authentication for all users

- Audit of all data access with automatic detection of anomalous access patterns

- Regular penetration testing

- Mandatory staff training in information security fundamentals
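Two of the controls listed above, least privilege with time-limited grants and auditing of every access attempt, can be sketched together. This is an in-memory illustration under assumed names (`AccessController`, `dr_a`, `trial_42` are all hypothetical), not a substitute for a real identity and access management system.

```python
import time
from dataclasses import dataclass, field


@dataclass
class Grant:
    user: str
    resource: str
    expires_at: float  # Unix timestamp after which the grant is void


@dataclass
class AccessController:
    grants: list = field(default_factory=list)
    audit_log: list = field(default_factory=list)

    def grant(self, user, resource, ttl_seconds, now=None):
        """Give one user access to one resource for a limited time."""
        now = time.time() if now is None else now
        self.grants.append(Grant(user, resource, now + ttl_seconds))

    def check(self, user, resource, now=None):
        """Allow access only under an unexpired matching grant; log every attempt."""
        now = time.time() if now is None else now
        allowed = any(
            g.user == user and g.resource == resource and now < g.expires_at
            for g in self.grants
        )
        self.audit_log.append((now, user, resource, allowed))
        return allowed

    def denied_counts(self):
        """Denied attempts per user, a crude input for anomaly detection."""
        counts = {}
        for _, user, _, allowed in self.audit_log:
            if not allowed:
                counts[user] = counts.get(user, 0) + 1
        return counts


ac = AccessController()
ac.grant("dr_a", "trial_42", ttl_seconds=60, now=0)
inside_window = ac.check("dr_a", "trial_42", now=30)
after_expiry = ac.check("dr_a", "trial_42", now=61)
other_user = ac.check("dr_b", "trial_42", now=30)
```

Access is denied by default, expires on its own, and both successful and failed attempts are recorded, so unusual patterns can be reviewed later.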

For AI systems working with medical images, storage-level encryption is critical. Intraoperative images of parathyroid glands contain not only the target anatomical structure but surrounding tissues that could potentially identify the patient.

Data protection technology evolves more slowly than data utilization technology—this gap creates a vulnerability window that grows with each new AI application in clinical practice.

AI as a Surgical Decision Support Tool: Assistant, Not Autopilot

A fundamental misconception in discussions about AI in surgery is the notion that technology can or should replace the surgeon. AI-assisted intraoperative imaging systems for parathyroid gland identification are positioned as supportive tools currently undergoing validation.

The clinical need for such systems stems from objective complexity: accidental removal or damage to parathyroid glands leads to hypocalcemia, while misidentification increases the risk of recurrent laryngeal nerve injury. AI does not make the decision "to remove or not to remove"—it provides additional information for the surgeon to make that decision.

Intraoperative Parathyroid Gland Identification: Where Computer Vision Exceeds Human Capability

Computer vision systems analyze intraoperative images in real-time using algorithms trained on thousands of annotated surgical images. AI's advantage lies in its ability to process multiple visual features simultaneously: color, texture, vascularization, and position relative to other structures.

The human eye may miss subtle differences, especially with atypical gland location or altered anatomy from previous surgeries. AI compares the current image against an extensive database of reference cases, expanding the diagnostic spectrum.

| Parameter | AI Capability | Limitation |
|---|---|---|
| Parathyroid gland size | Real-time detection of 3–8 mm structures | Rare anatomical variants may be absent from training dataset |
| Visual features | Simultaneous analysis of color, texture, vascularization | Similarity to lymph nodes, adipose tissue, thyroid tissue |
| Clinical context | Image-based recommendation provision | No access to preoperative data, laboratory values, medical history |

The system's diagnostic performance is not a replacement for clinical judgment, but an extension of it. Only the surgeon can integrate AI information with full clinical context: preoperative imaging data, parathyroid hormone laboratory values, and intraoperative findings.

Boundaries of Automation and the Surgeon's Role: Why Humans Always Have the Final Word

Automation in surgery has strict limits, determined not only by technological constraints but also by ethical and legal frameworks. The technology is in the validation stage, meaning it is not ready for autonomous application without supervision by an experienced specialist.

A surgical decision is not merely the identification of an anatomical structure, but an assessment of the risks and benefits of a specific action for a specific patient in a specific clinical situation. AI lacks access to the full context: medical history, patient preferences, comorbidities, and social factors that may influence outcomes.

The optimal model is "human-in-the-loop": the AI provides a recommendation with a confidence level, and the surgeon critically evaluates that recommendation and makes the final decision.

- The system highlights a suspicious structure on screen with a confidence level indicator.

- The surgeon visually confirms the finding, evaluating it within the context of surrounding anatomy.

- The surgeon makes a decision about further actions based on full clinical context.

- Legal responsibility for the surgical outcome rests with the surgeon, not the algorithm.
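The workflow above can be sketched as a small data flow in which the algorithm only ever produces a suggestion and the recorded decision always comes from the surgeon. The class names, labels, and confidence values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Suggestion:
    label: str         # what the system believes the highlighted structure is
    confidence: float  # value shown on screen, between 0 and 1


@dataclass
class Decision:
    suggestion: Suggestion
    final_label: str   # always set by the surgeon's review, never by the model alone
    overridden: bool


def review(suggestion, surgeon_confirms, surgeon_label=None):
    """Record the surgeon's decision; the suggestion is advisory only."""
    if surgeon_confirms:
        final = suggestion.label
    else:
        final = surgeon_label if surgeon_label is not None else "undetermined"
    return Decision(suggestion, final, overridden=not surgeon_confirms)


def override_rate(decisions):
    """Share of suggestions the surgeon did not accept."""
    if not decisions:
        return 0.0
    return sum(d.overridden for d in decisions) / len(decisions)


decisions = [
    review(Suggestion("parathyroid", 0.92), surgeon_confirms=True),
    review(Suggestion("parathyroid", 0.55), surgeon_confirms=False, surgeon_label="lymph node"),
]
rate = override_rate(decisions)
```

Logging each suggestion next to the surgeon's final call gives an audit trail for later review, and the override rate is one simple way to monitor how often clinical judgment departs from the algorithm.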

AI is a tool that extends the surgeon's capabilities but does not replace their expertise, experience, and capacity for clinical judgment under uncertainty.

Accountability and Responsibility in AI Systems: Who's Liable When the Algorithm Gets It Wrong

Distribution of Responsibility Between Developers and Clinicians

The legal framework for liability in medical AI systems remains fragmented: developers bear responsibility for product defects, while clinicians are accountable for clinical decisions made using that product.

For computer vision systems in parathyroid surgery, this means: if the algorithm misses a gland due to a technical error, liability may rest with the manufacturer; if a surgeon ignores a correct warning or blindly follows a false-positive signal without clinical verification, responsibility shifts to them.

When a system issues a recommendation without explaining its logic, clinicians cannot assess its reliability—this creates an ethical dilemma: trust an opaque algorithm or rely solely on their own experience.

Regulatory agencies (FDA, EMA) require validation on independent datasets and post-market surveillance, but standards for "sufficient transparency" in clinical applications are still evolving.

Legal Aspects of Medical Errors When Using AI

Case law on AI-assisted medical errors is virtually nonexistent, creating legal uncertainty for all stakeholders.

| Error Scenario | Question for Court | Responsible Party |
|---|---|---|
| Algorithm failed to identify parathyroid gland | Technical system defect? | Manufacturer |
| Surgeon did not complete AI system training | Inadequate preparation? | Institution |
| Surgeon ignored system warning | Clinical negligence? | Surgeon |

The doctrine of informed consent requires reconsideration. Should patients be notified that an AI system is being used in their surgery? Should they be told its accuracy metrics? Do they have the right to refuse its use?

Insurance companies are beginning to include AI-assisted procedures in professional liability policies, but premiums and coverage terms vary widely, reflecting the uncertainty of risks.

FIG_02: Liability Distribution Model in AI-Assisted Surgery

| Liability Level | Party | Risk Type |
|---|---|---|
| Technical algorithm defect | Developer | Product |
| Insufficient validation | Regulator | Regulatory |
| Incorrect interpretation | Clinician | Clinical |
| Lack of staff training | Institution | Institutional |
| Ignoring warnings | Surgeon | Professional |

Systematic Reviews and Meta-Analyses: Ethics of Data Synthesis in the Age of Information Abundance

Minimizing Publication Bias and Selective Outcome Reporting

Publication bias — the systematic distortion where studies with positive results are published more frequently than those with negative or null findings — remains the primary threat to meta-analysis validity.

For a systematic review on anti-VEGF therapy for neovascular age-related macular degeneration, this is critical: if studies showing no differences between drugs remain unpublished, network meta-analysis will overestimate the effectiveness of some agents relative to others.

Detection methods (funnel plots, Egger's test, trim-and-fill analysis) have limited sensitivity with small numbers of studies — this is not a bug, but a fundamental limitation of small-sample statistics.
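To make one of these methods concrete, the sketch below computes the intercept used in Egger's regression: each study's standardized effect (effect divided by its standard error) is regressed on its precision (one over the standard error), and an intercept far from zero suggests funnel-plot asymmetry. The effect sizes and standard errors are invented for illustration, and a real analysis would also test the intercept for statistical significance, which this sketch omits.

```python
def egger_intercept(effects, std_errors):
    """OLS of (effect / SE) on (1 / SE); returns (intercept, slope)."""
    x = [1.0 / se for se in std_errors]                   # precision
    y = [e / se for e, se in zip(effects, std_errors)]    # standardized effect
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


# Hypothetical symmetric case: every study estimates the same effect.
sym_intercept, sym_slope = egger_intercept([0.5, 0.5, 0.5, 0.5], [0.05, 0.1, 0.2, 0.3])

# Hypothetical asymmetric case: smaller studies (larger SE) report larger effects.
asym_intercept, _ = egger_intercept([0.2, 0.3, 0.6, 0.9], [0.05, 0.1, 0.2, 0.3])
```

With only four studies the fitted line rests on very few points, which is exactly the low-sensitivity situation the paragraph above describes.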

Ethical protocol for systematic review authors:

- Search for unpublished data through clinical trial registries (ClinicalTrials.gov, EudraCT).

- Contact original study authors to obtain unpublished results.

- Explicitly discuss the potential impact of publication bias on conclusions.

Transparency in Study Selection Methodology and Subgroup Analysis

Pre-registration of systematic review protocols (PROSPERO for medical reviews) establishes inclusion criteria, search strategy, and analysis plan before work begins.

For a review on the association between BMI and breast cancer risk, this means: authors must determine in advance whether they will analyze subgroups by menopausal status and molecular subtypes, or whether these analyses will be exploratory.

Post-hoc subgroup analyses without prior specification dramatically increase the risk of false-positive findings (p-hacking) and should be interpreted as hypothesis-generating rather than confirmatory.
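The size of the problem can be estimated with a standard calculation. Assuming, for simplicity, that $k$ subgroup tests are independent and each is run at significance level $\alpha$, the probability of at least one false-positive finding when no true effect exists is:

```latex
\mathrm{FWER} = 1 - (1 - \alpha)^{k},
\qquad
\alpha = 0.05,\; k = 10
\;\Rightarrow\;
\mathrm{FWER} = 1 - 0.95^{10} \approx 0.40
```

Ten unplanned subgroup comparisons therefore carry roughly a 40% chance of producing at least one spurious "significant" result. Real subgroups are rarely independent, so the figure is an approximation, but it shows why such findings should be treated as hypotheses to test rather than as conclusions.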

Reporting should follow PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) standards:

- Complete flow diagram of study selection.

- Tables of characteristics for included and excluded studies.

- Risk of bias assessment for each study using validated tools (Cochrane RoB 2, ROBINS-I).

The Future of AI Ethics in Personalized Medicine: From Equal Access to Fair Algorithms

Equity of Access to AI Technologies and the Digital Divide

The implementation of AI systems in clinical practice deepens existing healthcare inequalities: technologies concentrate in large academic centers in developed countries, leaving peripheral and low-resource facilities without access. For AI-assisted parathyroid gland identification, this means a gap in quality of care—surgeons in well-equipped clinics gain a tool that reduces complication risk, while their colleagues in regional hospitals work without this support.

Economic barriers include high licensing costs, the need for specialized equipment (high-resolution cameras, computational power), and staff training. An ethical response requires developing open-source solutions, subsidizing implementation in low-resource settings, and incorporating equitable access criteria into regulatory assessment.

Equity in AI medicine is not charity, but a condition for the technology's validity itself. A system that works only for wealthy centers doesn't solve the clinical problem—it reproduces social inequality.

Preventing Algorithmic Discrimination and Ensuring Data Representativeness

Algorithmic bias arises when training data is not representative of the population on which the system will be applied. If an AI model for parathyroid gland identification is trained predominantly on images from patients of European descent, its accuracy may be lower in patients of other ethnic groups due to differences in anatomy, tissue pigmentation, or comorbid pathology.

Systematic reviews must assess the demographic composition of participants in included studies and explicitly discuss limitations in generalizability of results. For meta-analysis of anti-VEGF therapy, it's critical to account for efficacy and safety differences between populations due to genetic factors, comorbidity patterns, and access to monitoring.

| Verification Level | What to Assess | Red Flag |
|---|---|---|
| Training Data | Demographic composition, geographic origin of samples | More than 80% from one ethnic group or region |
| Validation | Accuracy by subgroups (age, sex, ethnicity, comorbidity) | Accuracy gap of more than 5 percentage points between subgroups |
| Regulation | Explicit statement of applicability limitations in instructions | Absence of fairness analysis in documentation |

Regulatory requirements are evolving toward mandatory fairness assessment: developers must demonstrate that the system works with comparable accuracy for all relevant subgroups, or explicitly state applicability limitations.
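A subgroup check of this kind is straightforward to automate. The sketch below computes accuracy per subgroup and the largest gap between any two subgroups, then compares it with a threshold. The 0.05 threshold echoes the red flag in the table above; the subgroup names and validation rows are hypothetical.

```python
def subgroup_accuracy(rows):
    """rows: iterable of (subgroup, predicted, actual). Returns {subgroup: accuracy}."""
    totals, correct = {}, {}
    for group, predicted, actual in rows:
        totals[group] = totals.get(group, 0) + 1
        correct[group] = correct.get(group, 0) + (predicted == actual)
    return {g: correct[g] / totals[g] for g in totals}


def max_accuracy_gap(rows):
    """Difference between the best and worst subgroup accuracy."""
    acc = subgroup_accuracy(rows)
    return max(acc.values()) - min(acc.values())


# Hypothetical validation results: group A is 9/10 correct, group B is 7/10 correct.
rows = (
    [("A", 1, 1)] * 9 + [("A", 1, 0)] * 1 +
    [("B", 1, 1)] * 7 + [("B", 0, 1)] * 3
)

accuracy = subgroup_accuracy(rows)
gap = max_accuracy_gap(rows)
red_flag = gap > 0.05  # threshold taken from the verification table; adjust per policy
```

Overall accuracy here is 80%, which hides the fact that one group is served noticeably worse than the other. Reporting the per-subgroup figures alongside the average makes that visible.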

An algorithm that works well on average but poorly for a minority is not progress—it's systematized error. Fairness is not an addition to validity, but part of it.

FIG_03: Principles of Ethical AI Implementation in Medicine

| Principle | Implementation | Metric |
|---|---|---|
| Transparency | Explainable AI | SHAP values |
| Fairness | Diverse datasets | Equity metrics |
| Accountability | Audit trails | Decision logs |
| Safety | Validation studies | AUC, NPV, PPV |
| Privacy | Federated learning | Privacy budget |
| Human Control | Human-in-the-loop | Override rate |

↓

CLINICAL BENEFIT > TECHNOLOGICAL RISK


Frequently Asked Questions

AI ethics in medicine is a system of principles governing the development and application of artificial intelligence in clinical practice. It includes patient autonomy, algorithm transparency, equitable access, and protection of personal data. These principles ensure safe technology implementation while maintaining physician accountability.

AI computer vision systems analyze intraoperative images in real-time, helping surgeons recognize parathyroid glands. This reduces the risk of accidental damage or removal of the glands, preventing hypocalcemia. The technology remains an assistive tool—the surgeon makes the final decision.

Yes, informed consent is mandatory when using AI systems in diagnosis and treatment. Patients must understand that algorithms participate in decision-making, what data is processed, and what the risks are. This aligns with the principle of autonomy and medical ethics requirements.

No, this is a common myth. AI does not replace doctors but complements their work. Algorithms analyze data and suggest options, but clinical thinking, empathy, and accountability remain with the specialist. The systematic reviews discussed above position AI as a decision support tool that is still being validated, not as an autonomous decision-maker.

Protection is ensured through encryption protocols, access controls, and anonymization of patient personal data. Systems must comply with information security requirements and data protection legislation. Regular audits and certification confirm technology reliability.

Responsibility is distributed among algorithm developers, the medical institution, and the treating physician. The physician bears primary clinical responsibility, as they make the final decision based on AI recommendations. Legal aspects require clear documentation of the decision-making process.

Transparency means that doctors and patients can understand how an AI system arrived at a specific conclusion or recommendation. Explainable algorithms show key factors influencing the decision, which increases trust. This is critically important for clinical practice and ethical acceptability of technologies.

Assess the transparency of study selection methodology, presence of a protocol, and publication bias analysis. A quality meta-analysis specifies inclusion criteria, search databases, and statistical methods. Indexing in PubMed does not by itself guarantee rigor, so check whether the review was peer-reviewed and whether it follows PRISMA reporting.

Yes, algorithmic discrimination is possible if training data contains bias by gender, age, ethnicity, or social status. This leads to unequal quality of diagnosis and treatment for different groups. Prevention requires diverse datasets and regular fairness audits of algorithms.

No, this is a misconception—AI accuracy depends on data quality, context, and task type. In some narrow areas (such as image analysis), algorithms show high accuracy but fall short in comprehensive assessment. Optimal results are achieved through collaborative work between physician and AI.

Equity requires technology accessibility for all healthcare facilities regardless of region and funding. Government support programs, standardization, and specialist training are necessary. This prevents digital inequality in healthcare quality between urban and rural areas.

Publication bias occurs when studies with positive results are published more frequently than those with negative or neutral findings. This distorts meta-analysis conclusions and inflates intervention effectiveness. Minimization requires searching for unpublished data and statistical tests for bias.

No, critical life-and-death decisions must remain with physicians, guided by ethical principles and human judgment. AI can provide prognostic information but lacks the moral standing for such decisions. Automation in critical situations is ethically unacceptable.

AI transforms the surgeon's role from executor to supervisor of high-tech systems while maintaining ultimate control. Algorithms assist in structure identification and planning, but manual skills and clinical reasoning remain irreplaceable. This requires new competencies in working with digital tools.

Opaque algorithms ("black boxes") make error detection difficult, reduce physician and patient trust, and create legal risks. The inability to explain decisions impedes clinical learning and recommendation verification. This can lead to incorrect treatment and ethical violations.

Ethics will focus on equitable access to personalized technologies, genomic data protection, and prevention of genetic discrimination. New regulatory frameworks will be needed to balance innovation and patient rights. International collaboration will be key to developing universal standards.